serhii.net

In the middle of the desert you can say anything you want

-

Day 2704 (28 May 2026)

bttf for CLI time parsing and interesting help pattern

BurntSushi/bttf: A command line tool for datetime arithmetic, parsing, formatting and more.

I use wolframalpha for most of my casual date ops etc. but the project is cool and I may need it.

But the most interesting bit is the documentation.

Quoting README:

> I may ship arbitrary and capricious breaking changes at this point. You have been warned. […] And it doesn’t give a hoot about POSIX (other than the TZ environment variable).

And THIS. I either love it or hate it, can’t decide:

> -h/--help This flag prints the help output for bttf. Unlike most other flags, the behavior of the short flag, -h, and the long flag, --help, is different. The short flag will show a condensed help output while the long flag will show a verbose help output.

It breaks my usual expectations but damn it’s a cool pattern that I really want to be a thing! You’re allowed to break conventions if your thing is really smarter and you’re explicit and intentional about it.
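For my own tools, the same split could be sketched in Python with argparse. This is only an illustration of the pattern, not how bttf does it: the program name demo, the --count option and both help texts are made up, and the built-in help is switched off so that -h and --help can be registered as two separate actions.

```python
import argparse

# Hypothetical help texts for an imaginary "demo" tool.
SHORT_HELP = "usage: demo [-h | --help] [--count N]"
LONG_HELP = SHORT_HELP + (
    "\n\nOptions:\n"
    "  -h         show this condensed help and exit\n"
    "  --help     show the verbose help and exit\n"
    "  --count N  how many times to do the thing (default: 1)"
)


class PrintTextAndExit(argparse.Action):
    """Print a fixed text and exit, like argparse's built-in help action."""

    def __init__(self, option_strings, dest, text="", **kwargs):
        # nargs=0 makes this a flag; SUPPRESS keeps it out of the namespace.
        super().__init__(option_strings, dest, nargs=0,
                         default=argparse.SUPPRESS, **kwargs)
        self.text = text

    def __call__(self, parser, namespace, values, option_string=None):
        print(self.text)
        parser.exit()


def build_parser():
    # add_help=False frees up -h and --help for our own actions.
    parser = argparse.ArgumentParser(prog="demo", add_help=False)
    parser.add_argument("-h", action=PrintTextAndExit, text=SHORT_HELP)
    parser.add_argument("--help", action=PrintTextAndExit, text=LONG_HELP)
    parser.add_argument("--count", type=int, default=1)
    return parser


args = build_parser().parse_args(["--count", "3"])
print(args.count)
```

Being explicit about the difference inside the help text itself (as bttf does in its docs) is probably what makes the broken convention tolerable.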

-

Day 2695 (19 May 2026)

Merge and rearrange pages from multiple PDF

Tiny neat app that shows pages from all PDFs and allows you to rearrange the order, does one thing and does it wonderfully!

-

Day 2692 (16 May 2026)

FNP-2026 at LREC paper notes

- FNP – FNP 2026

- FNP 2026 proceedings PDF.

When Tables Go Crazy: Evaluating Multimodal Models on French Financial Documents

- Links

- [2602.10384] When Tables Go Crazy: Evaluating Multimodal Models on French Financial Documents

- Github contains both dataset and scripts!

- Benchmark French visual finance docs, includes evaluation on multiple VLMs

- Chart checkboxes graphs and weird edge cases specifically present

- Bits

- LLMs when asked to generate questions usually generate only questions they have answers to

CFQA: A Chinese Financial Question Answering Benchmark from Corporate Annual Reports

- Links:

- ZackZhu00/CFQA_Chinese_Finance_Question_Answering · Datasets at Hugging Face

- zhutianning/Hallucination-detection-for-RAG: This project aims to design and implement a hallucination detection and evaluation pipeline for Retrieval- Augmented Generation (RAG) systems processing multi-modal financial reports (text, tables, images/charts).

- Unclear relation:

- Takeaway

- RAG decreases hallucinations and improves fact extraction scores, but the effect is not clear for other tasks involving reasoning, and it decreases scores w/ some models

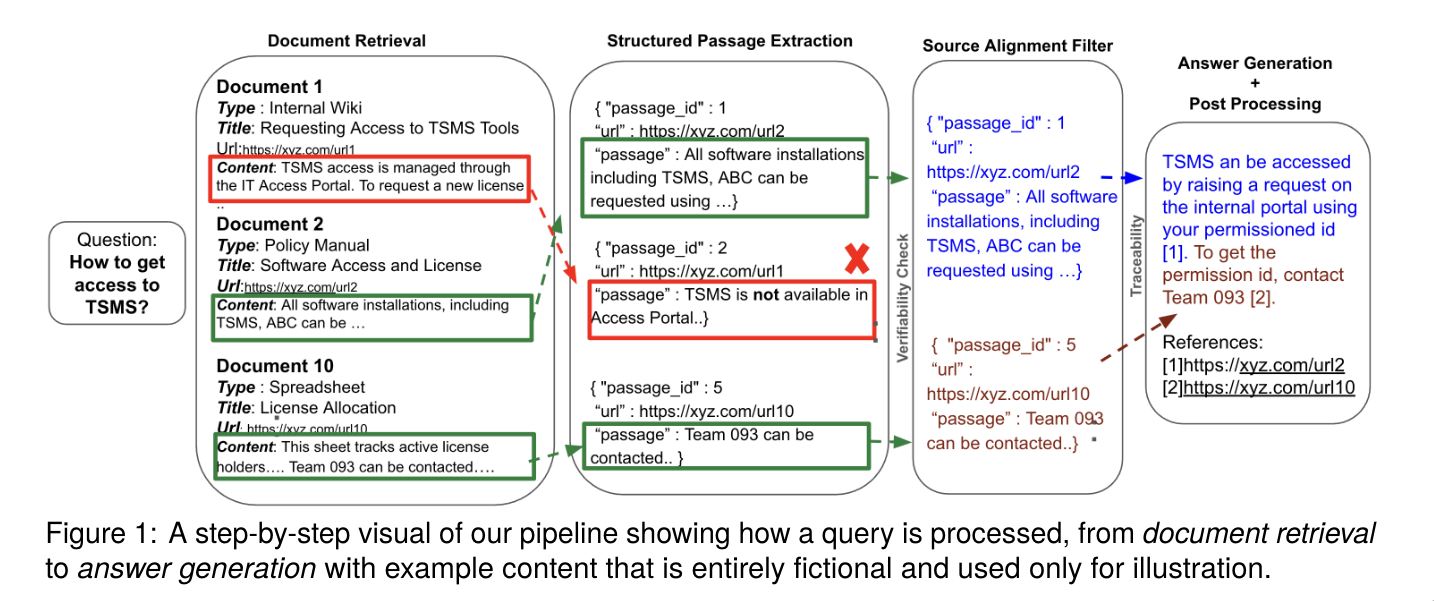

(!) Verifiable Financial Enterprise Question Answering via Inference-Time Grounding and Traceability

- LLMs grounding verifiable citations

- Framework, modular, real-time

- Verify citations and fix citation drift by looking at overlaps/presence w/ source documents.

- Sentence-level citations allegedly lead to better groundedness

- TODO Many many interesting citations to parse

- Related paper I just found: [2604.23588] FinGround: Detecting and Grounding Financial Hallucinations via Atomic Claim Verification

-

Day 2690 (14 May 2026)

Prodigy NLP annotation tool

Prodigy · An annotation tool for AI, Machine Learning & NLP

First seen in Anshu Kiran Sharma - PhD Student Computer Science, Natural Language Processing’s “Once upon a kernel” @ LREC 2026

-

Day 2689 (13 May 2026)

Borda count

- Borda count - Wikipedia is a way to fairly rank, rating = number of candidates below them in ranking

- Originally for voting

- Used in IR to aggregate ratings and benchmarking models on tasks w/ different metrics

- First seen in [2510.20508] Assessing the Political Fairness of Multilingual LLMs: A Case Study based on a 21-way Multiparallel EuroParl Dataset

- BLEU variants not comparable across language pairs so used BC to rank
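A minimal sketch of the counting rule above (rating = number of candidates ranked below), assuming every ranking is a complete best-to-worst list with no ties. The model names and the three rankings are made up; think of each ranking as coming from a different metric or language pair.

```python
from collections import defaultdict


def borda_count(rankings):
    """Sum Borda points over several complete rankings (best first, no ties)."""
    scores = defaultdict(int)
    for ranking in rankings:
        n = len(ranking)
        for position, candidate in enumerate(ranking):
            # Points = number of candidates ranked below this one.
            scores[candidate] += n - 1 - position
    return dict(scores)


# Hypothetical rankings of three models under three different metrics.
rankings = [
    ["model_a", "model_b", "model_c"],
    ["model_b", "model_a", "model_c"],
    ["model_a", "model_c", "model_b"],
]
scores = borda_count(rankings)
final_order = sorted(scores, key=scores.get, reverse=True)
print(scores)       # {'model_a': 5, 'model_b': 3, 'model_c': 1}
print(final_order)  # ['model_a', 'model_b', 'model_c']
```

Only the order within each ranking matters, which is why it works when the underlying scores are not comparable with each other.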

Related: Likert scale


-

Day 2655 (09 Apr 2026)

xh notes

ducaale/xh: Friendly and fast tool for sending HTTP requests

Shorthand

    # from README
    xh http://localhost:3000/users # resolves to http://localhost:3000/users
    xh localhost:3000/users        # resolves to http://localhost:3000/users
    xh :3000/users                 # resolves to http://localhost:3000/users
    xh :/users                     # resolves to http://localhost:80/users
    xh example.com                 # resolves to http://example.com
    xh ://example.com              # resolves to http://example.com
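To make the shorthand rules stick, here is a rough Python re-creation of them. It only reproduces the six README examples above and is not xh's actual parsing logic; the function name expand_shorthand is my own.

```python
import re


def expand_shorthand(url: str) -> str:
    """Expand xh-style URL shorthand, covering only the README examples."""
    # Already has a scheme such as http:// or https://: keep as-is.
    if re.match(r"^[a-zA-Z][a-zA-Z0-9+.-]*://", url):
        return url
    # "://example.com" -> default scheme.
    if url.startswith("://"):
        return "http" + url
    # ":3000/users" or ":/users" -> localhost, with port 80 when none is given.
    if url.startswith(":"):
        rest = url[1:]
        if rest == "" or rest.startswith("/"):
            return "http://localhost:80" + rest
        return "http://localhost:" + rest
    # Bare host, optionally with port and path.
    return "http://" + url


for short in ["localhost:3000/users", ":3000/users", ":/users", "://example.com"]:
    print(short, "->", expand_shorthand(short))
```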

Convert enum to dict in python

python - How to make a dict from an enum? - Stack Overflow

    # Source - https://stackoverflow.com/a/60451617
    # Posted by Chris Doyle
    # Retrieved 2026-04-09, License - CC BY-SA 4.0
    from enum import Enum

    class Shake(Enum):
        VANILLA = "vanilla"
        CHOCOLATE = "choc"
        COOKIES = "cookie"
        MINT = "mint"

    dct = {i.name: i.value for i in Shake}
    print(dct)

-

Day 2647 (01 Apr 2026)

NiceGUI notes

NiceGUI is freaking awesome.

-

Resources

- NiceGUI Documentation that leads to nicegui/examples at main · zauberzeug/nicegui, which are the most useful examples I’ve seen in any python project ever.

- There’s a FAQs · zauberzeug/nicegui Wiki that I found far too late

- Has bits about performance, long-running functions etc.
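The general asyncio idea behind the long-running-functions bit, as far as I understand it, is to keep blocking work off the event loop. Below is a stdlib-only sketch (no NiceGUI involved): the heartbeat task stands in for UI updates, blocking_work is a made-up slow function, and the debug settings make asyncio log callbacks that hog the loop for more than 50 ms.

```python
import asyncio
import time


def blocking_work(seconds: float) -> str:
    """Stand-in for a slow synchronous function (hypothetical)."""
    time.sleep(seconds)
    return "done"


async def main():
    loop = asyncio.get_running_loop()
    loop.set_debug(True)                 # log slow callbacks
    loop.slow_callback_duration = 0.05   # threshold in seconds

    ticks = 0

    async def heartbeat():
        # Stands in for UI updates that need the event loop to stay free.
        nonlocal ticks
        while True:
            await asyncio.sleep(0.01)
            ticks += 1

    hb = asyncio.create_task(heartbeat())
    # Running the blocking call in a worker thread keeps the loop responsive.
    result = await asyncio.to_thread(blocking_work, 0.2)
    hb.cancel()
    return result, ticks


result, ticks = asyncio.run(main())
print(result, ticks)
```

Calling blocking_work(0.2) directly inside main() instead would freeze the heartbeat for the whole 0.2 s and trigger the slow-callback warning.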

-

Useful examples

- search_as_you_type for updating a table based on

Misc

- ui.list | NiceGUI shows very pretty complex lists with sections!

- ui.icon | NiceGUI works for icons: Material Symbols & Icons - Google Fonts

- Tooltips can contain all other elements! ui.tooltip | NiceGUI

- All inputs, labels date etc: Input | Quasar Framework

- ui.radio | NiceGUI

  - props('inline') for inline, remove for vertical; dense exists

- ui.number | NiceGUI makes binding and processing string ui.inputs easier!

Modularization

- Modularization example: nicegui/examples/modularization at main · zauberzeug/nicegui

- apirouter

- fn, class, etc.

    # somewhere
    def create() -> None:
        @ui.page('/a')
        def page_a():
            with theme.frame('- Page A -'):
                message('Page A')
                ui.label('This page is defined in a function.')

    from somewhere import create
    # Example 2: use a function to move the whole page creation into a separate file
    # in main.py
    function_example.create()

Slots

OK I think I got it! In e.g. Select | Quasar Framework look for “slots” then you can use them thus1:

    sel = ui.select(
        list(items),
        value=item,
    )
    with sel.add_slot("after"):
        ui.button(icon="description").props("flat no-caps").on(
            "click",
            lambda e: ui.navigate.to(f"/bp/{whatever}"),
        )

Table magic

    table = ui.table()  # ...
    with table.add_slot("body-cell-ColumnName"):
        with table.cell("ColumnName"):
            # button w/ cell text
            ui.button().props("""
                :innerHTML="props.value"
                """).on(
                "click",
                js_handler="() => emit(props.row.SomeColumnName)",
                handler=lambda e: ui.navigate.to(f"/sth/{e.args}"),  # e.args is cell content
            )

    # conditionals
    .props("""
        flat no-caps
        :label="props.value !== 'N/A' ? props.value : ''"
        """)

Link

… with button

    with ui.link(target="/sd"):
        ui.button("Show", icon="description")

… without underline

    with ui.link(target=target_link).classes("no-underline"):
        ui.item_label("look ma no underline!")

Multiple badges

Ref: Badge: Floating - Quasar Playground, but that’s quasar-heavy. Strangely not mentioned in the official quasar docs either. After playing with this, this is the python solution:

    # remove the floating prop and add one of these classes.
    # Removing the prop is useful if using e.g. both top-left and top-right
    # then they are on an identical height
    ui.badge(faiss_dist_str, color="light-blue-10").props(
        # "floating"
    ).classes("absolute-top-left")

Styling

- Quasar header classes: Typography | Quasar Framework

Events

    ui.table(rows=rows).props("flat bordered").on(
        "row-click",
        lambda e: ui.navigate.to(f"/pr/{e.args[1]['ISIN']}"),
    )

The row-click event comes from quasar: https://quasar.dev/vue-components/table#qtable-api

APIs

- ui.run(..., fastapi_docs=True) to make documentation available

- nicegui/examples/api_requests/main.py at main · zauberzeug/nicegui

    async def show_new_quote():
        async with httpx.AsyncClient() as client:
            response = await client.get('https://zenquotes.io/api/quotes')
        quote = random.choice(response.json())['q']
        label.text = f'“{quote}”'

Snippets

Debugging long-running functions

From the FAQ:

    # (..)
    app.on_startup(setup)
    #

    def setup():
        # if CFG.debug_mode:
        import asyncio
        loop = asyncio.get_running_loop()
        loop.set_debug(True)
        loop.slow_callback_duration = 0.05

Uploading files

    ui.upload(
        auto_upload=True, on_upload=lambda e: handle_upload(e)
    ).classes("max-w-full")

    async def handle_upload(e: events.UploadEventArguments):
        with tempfile.NamedTemporaryFile(delete=False, prefix="uns") as tmp:
            save_path = Path(tmp.name)
        await e.file.save(save_path)
        return save_path

Bonus: FastAPI File upload

- python - How to Upload File using FastAPI? - Stack Overflow has many diff ways

- Building a Secure and Efficient File Upload API in FastAPI | Mahdi Abu Tafish is a deep dive

- blocking operations, security, etc.

TODO

Focus

element.run_method('focus') focuses the element, I could connect this to ui.keyboard | NiceGUI to do nice keyboard-centric focus of important fields!

Ref: element.run_method(‘focus’) not working · Issue #1092 · zauberzeug/nicegui (not documented elsewhere I could find)

Elsewhere

Validating in real time based on a pydantic BaseModel!: Real-Time Form Validation in Python Using Pydantic | by Aman Deep | Medium

Async hell

Using a variable globally defined outside a function creates issues. E.g. globally WHAT=‘ever’ and then inside a function. TODO better description.

-

-

Day 2646 (31 Mar 2026)

Translating pattern with LLMs

I know enough German to judge but not enough to write grammatically myself, and this is a pattern I don’t want to forget.

This is SO MUCH BETTER than anything else I’ve used for this! For very short snippets it’s golden

-

Day 2645 (30 Mar 2026)

LaTeX paper checklist

For the main file, see: 240116-1701 LaTeX best practices

Camera-ready checklist

- Enable reviewing mode in Overleaf

- Change package options [review/final] etc.

- Authors emails, names, order correct?

- Acknowledgements present and uncommented?

- Anonymity: uncensor names+URIs

- Are acknowledgements, references, appendices a) allowed, b) part of page limit?

- COMPRESSION: reconsider all hacks if you got more space for the camera-ready version

  - \setlength{\itemsep}{0pt} \setlength{\parskip}{0pt} and friends

  - \setlength{\belowcaptionskip}{0pt} \setlength{\abovecaptionskip}{10pt} <- default values

  - \looseness-1

  - Any paragraphs to un-merge for readability?

- Go through pre-flight checklist below

- Manually check ALL review-mode changes at the end!

Pre-flight checklist

- Ask LLMs to proofread! THEN do the steps below.

- Acknowledgements, references, appendices: a) allowed, b) count towards page limit?

- In which order?

- Anonymity: acknowledgements, again!

- Search for TODOs in the text!

- Consistency:

- When to use single/double quotes (quotes), when emphasis (foreign languages?)

- terminology?

- (including in captions and inside figures (labels, titles!))

- Table headers’ variables and names are easy to forget to update!

- Tables

- Did any footnote markers get deleted when pasting new data in tables?

- Adding \bottomrule helps if the distance between table and caption is too small

- Titles

- Title Case where needed (paper title + sections)

- Break the paper title in some pretty way

  - \mbox to avoid breaks, \- within a word to mark allowed break points

- Citations+references

- before periods and footnotes

- always w/ NBSP

- Double-check each reference, including inside table/figure captions!

- citep=cite, citet if subject, citealp in parentheses

- Don’t forget multiple citations exist [1,3]!

- Commands/macros

- Search for \TODO and any custom commands/macros you created

- Check the spacing after any custom macros

- Typography bits

- 231206-1501 Hyphens vs dashes vs en-dash em-dash minus etc

- Smart Quotes: Either `x’ and ``x’’ or \enquote{} everywhere

- Large Numbers: Write large numbers as 54{,}000.99

- ~ is \textasciitilde: check any estimate numbers (~50%)!

- % is \%; check all percentages!

- \ is \textbackslash

- underscores, subscripts, superscripts are dangerous

- Avoid footnotes after numbers

- does minted caption use the same font as the rest?

- -, --, --- are the safest: look for and remove pasted UTF8 ones

- NBSP (search and manually check each!)

  - Citations, \ref references (Figure~\ref{fig:somefig})

    - Actually \autoref!

  - TODO

- Look at logs!
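Some of the "search for" items above can be semi-automated. A small sketch, assuming the .tex source is available as a string: the patterns are my own rough heuristics, not a real linter, and every hit still needs a manual look (e.g. \citet after a space at the start of a sentence is fine, and TODOs inside comments get flagged too).

```python
import re

# (label, pattern) pairs; heuristic only, expect false positives.
CHECKS = [
    ("leftover TODO", re.compile(r"TODO")),
    ("plain space before \\ref or \\cite (NBSP?)",
     re.compile(r" \\(?:auto)?(?:ref|cite\w*)\{")),
    ("pasted Unicode dash or quote",
     re.compile("[\u2013\u2014\u2018\u2019\u201c\u201d]")),
    ("bare ~ before a number", re.compile(r"(?<![\\\w}])~\d")),
    ("unescaped % after a number", re.compile(r"\d%")),
]


def lint_tex(text):
    """Return (line number, label) for every heuristic that fires on a line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in CHECKS:
            if pattern.search(line):
                findings.append((lineno, label))
    return findings


# Made-up sample: line 1 and 2 have problems, line 3 is clean.
sample = "\n".join([
    r"See Figure \ref{fig:a} and \citep{x}.",
    r"Roughly (~50%) of cases, TODO verify.",
    r"See Figure~\ref{fig:a}, about 50\% of cases.",
])
for lineno, label in lint_tex(sample):
    print(f"line {lineno}: {label}")
```

For a real paper the same function could be fed the contents of each .tex file; reading files is left out to keep the sketch short.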

Things that can go wrong with content

- Read through all analysis and tables, make sure the data makes sense, the analysis/results match the numbers in the tables, the plots and the tables match to each other.

- Make sure whatever you used to generate plots and tables used the exact same data!