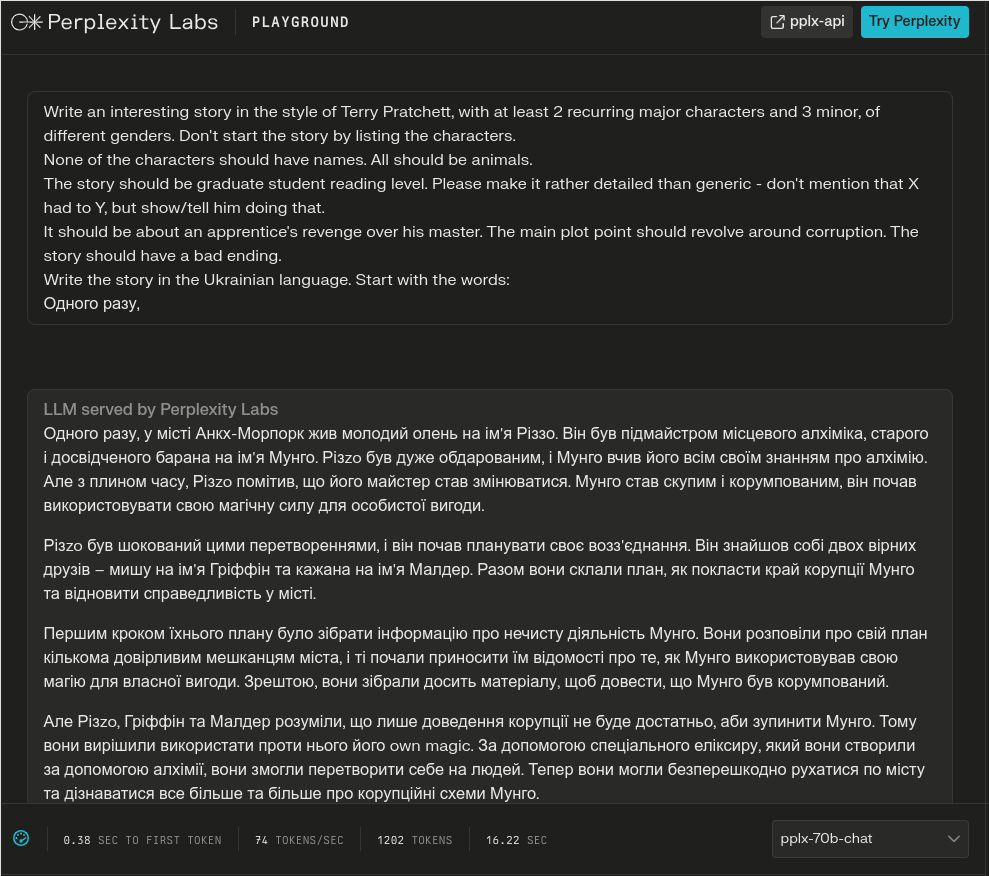

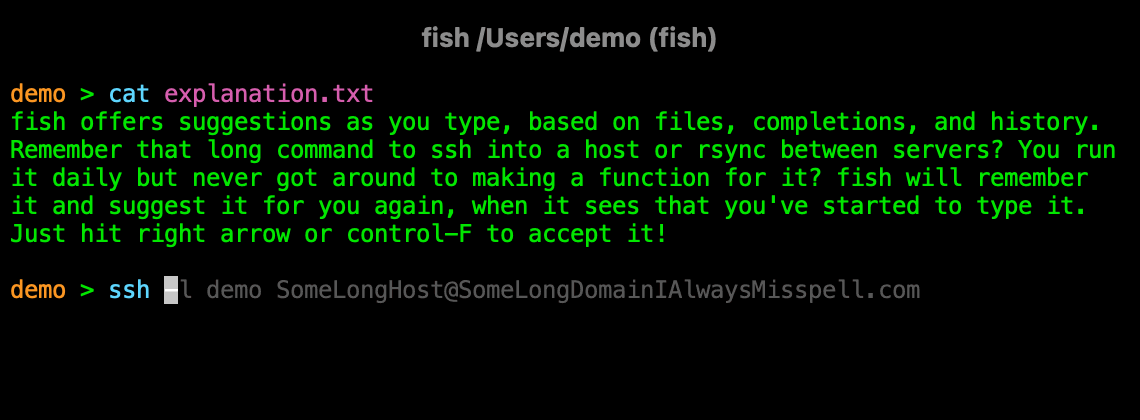

bttf for CLI time parsing and interesting help pattern

BurntSushi/bttf: A command line tool for datetime arithmetic, parsing, formatting and more.

I use Wolfram Alpha for most of my casual date operations, but the project is cool and I may need it.

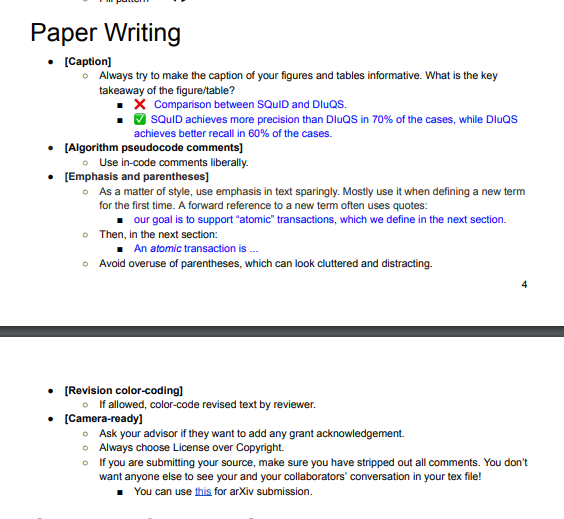

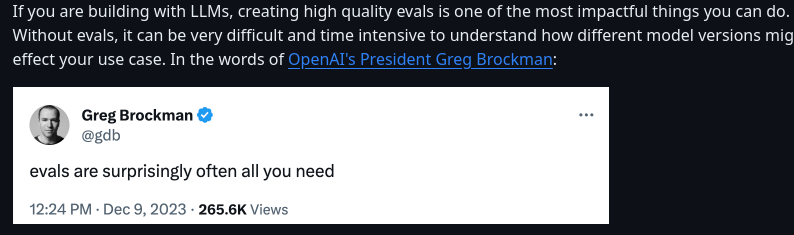

But the most interesting bit is the documentation.

Quoting README:

I may ship arbitrary and capricious breaking changes at this point. You have been warned. […] And it doesn’t give a hoot about POSIX (other than the `TZ` environment variable).

And THIS. I either love it or hate it, can’t decide:

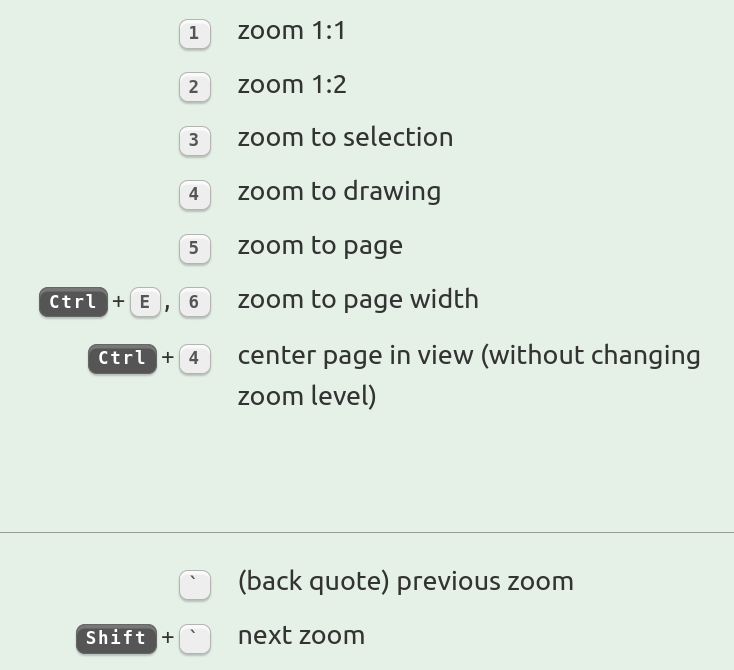

-h/--help

This flag prints the help output for bttf.

Unlike most other flags, the behavior of the short flag, -h, and the long flag, --help, is different. The short flag will show a condensed help output while the long flag will show a verbose help output.

It breaks my usual expectations but damn it’s a cool pattern that I really want to be a thing! You’re allowed to break conventions if your thing is really smarter and you’re explicit and intentional about it.
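To get a feel for the pattern, here is a minimal sketch of how the split `-h` / `--help` behavior could be reproduced with Python's `argparse`. This is not how bttf does it; the program name `demo`, the `--utc` flag, the help texts, and the `PrintTextAndExit` class are all made up for illustration.

```python
import argparse

# Hypothetical help texts for an imaginary "demo" tool.
SHORT_HELP = """\
usage: demo [-h | --help] [--utc]
  -h      condensed help
  --help  verbose help
  --utc   print times in UTC"""

LONG_HELP = SHORT_HELP + """

--utc
    Print all times in UTC instead of the local time zone.

-h/--help
    The short and the long flag intentionally behave differently:
    -h is condensed, --help is verbose."""


class PrintTextAndExit(argparse.Action):
    """Print a fixed text and exit, like argparse's built-in help action."""

    def __init__(self, option_strings, dest, text, **kwargs):
        # nargs=0: the flag takes no value; SUPPRESS keeps it out of the namespace.
        super().__init__(option_strings, dest, nargs=0,
                         default=argparse.SUPPRESS, **kwargs)
        self.text = text

    def __call__(self, parser, namespace, values, option_string=None):
        print(self.text)
        parser.exit(0)


def build_parser():
    # add_help=False disables the default action that binds -h and --help together.
    parser = argparse.ArgumentParser(prog="demo", add_help=False)
    parser.add_argument("-h", action=PrintTextAndExit, text=SHORT_HELP)
    parser.add_argument("--help", action=PrintTextAndExit, text=LONG_HELP)
    parser.add_argument("--utc", action="store_true")
    return parser
```

Calling `build_parser().parse_args(["-h"])` prints the condensed text and exits, while `["--help"]` prints the verbose one. The key design choice is registering the two flags as separate arguments instead of as aliases of one.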

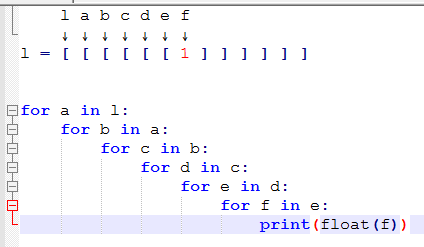

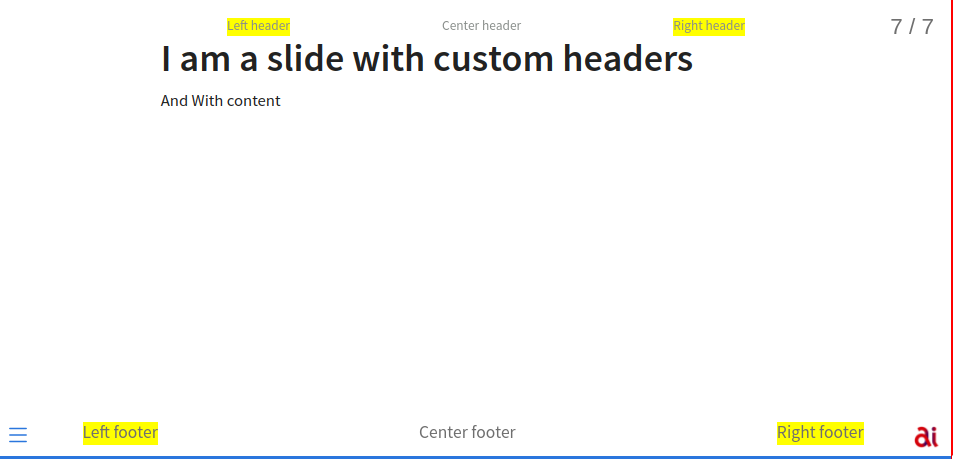

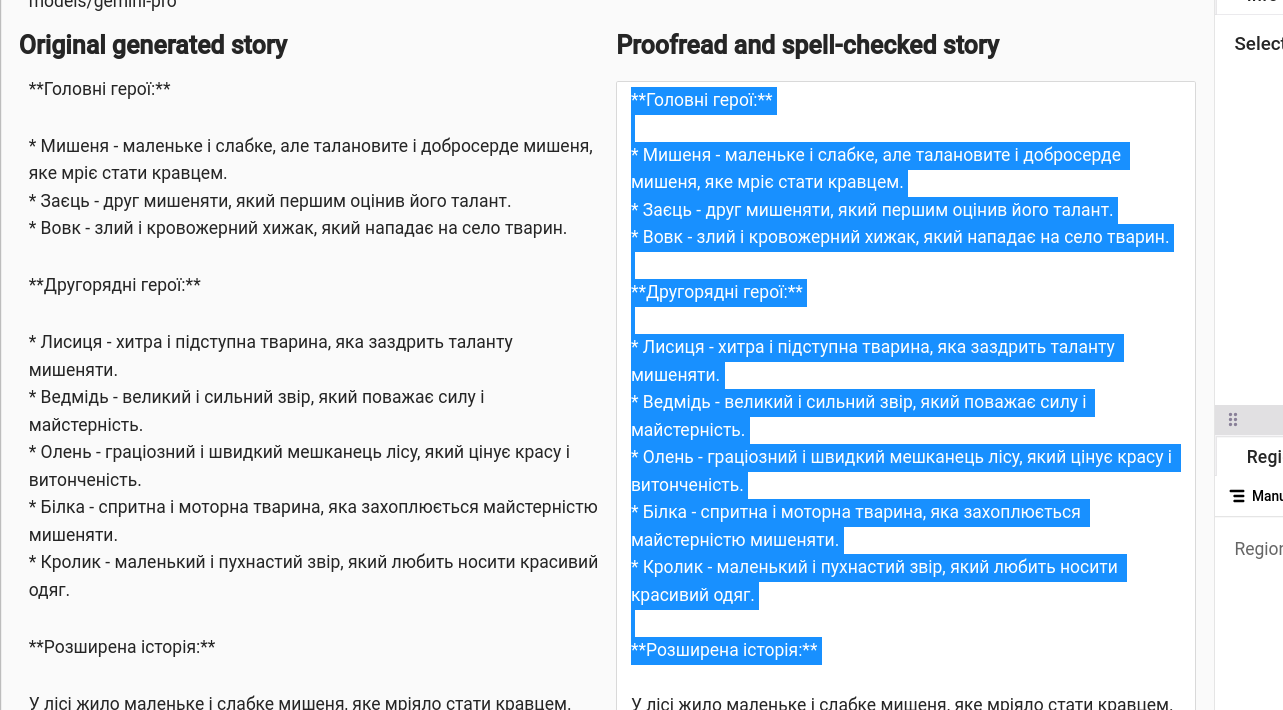

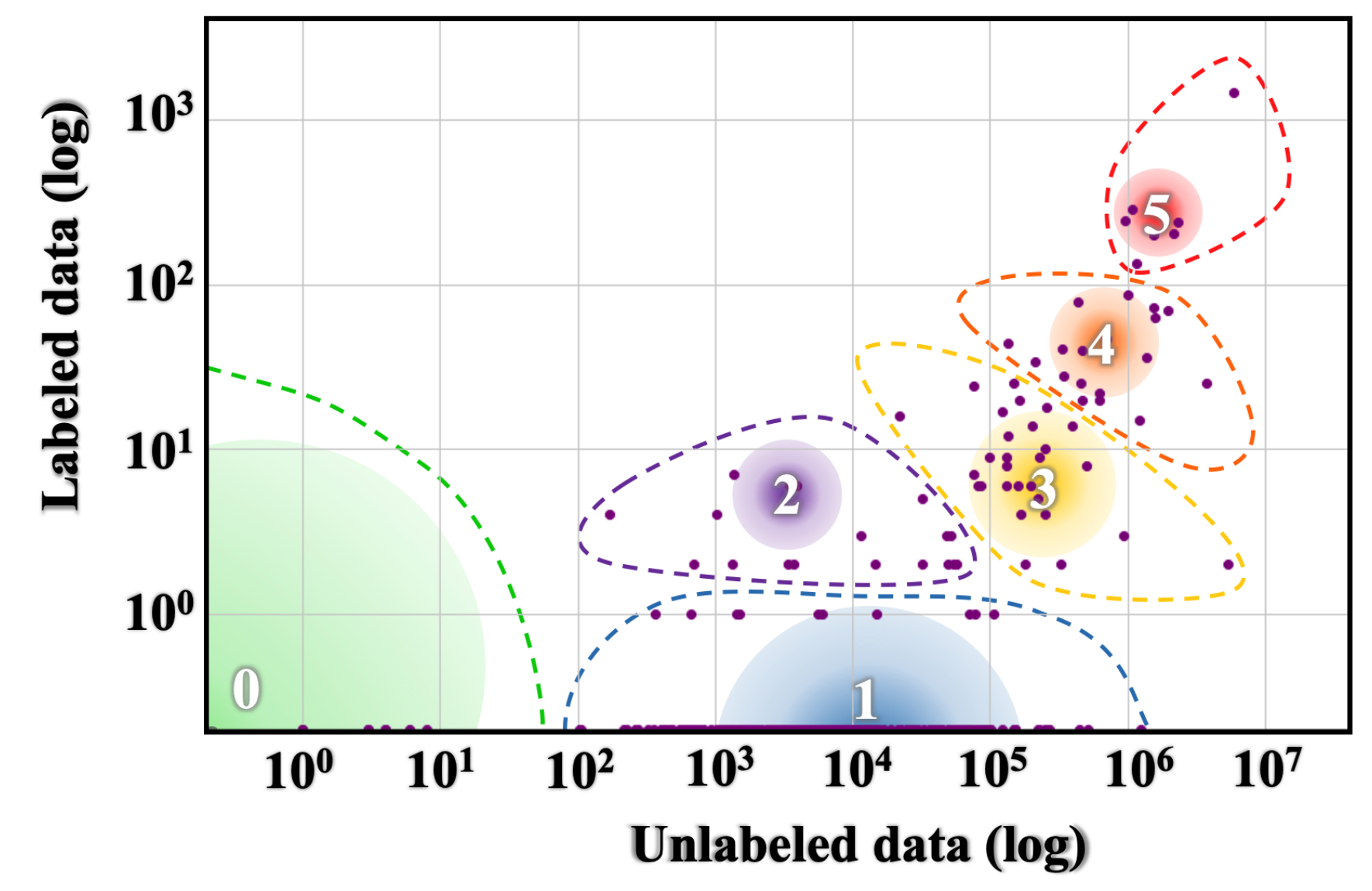

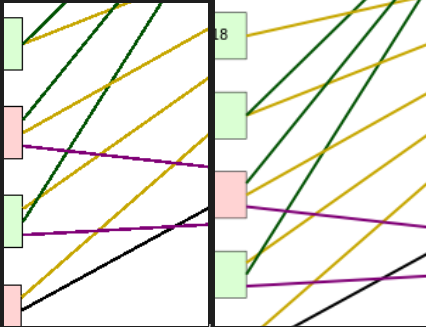

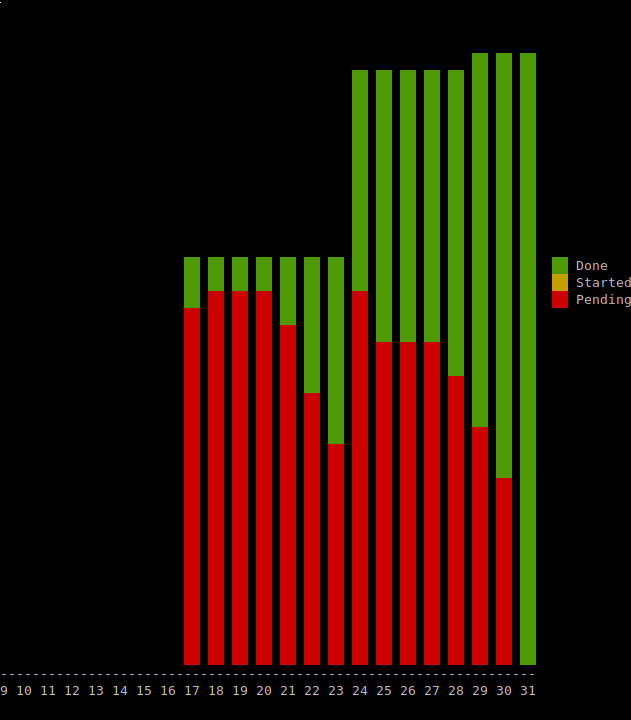

(Preserving l/r width)


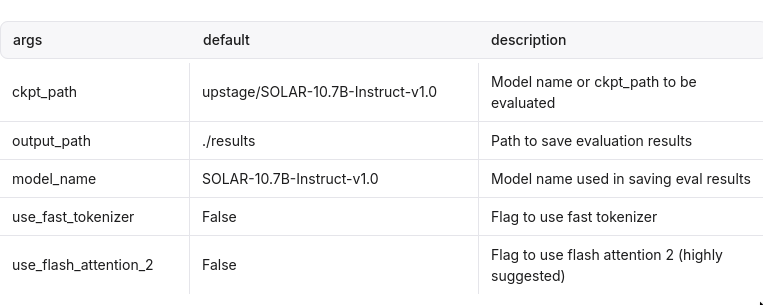

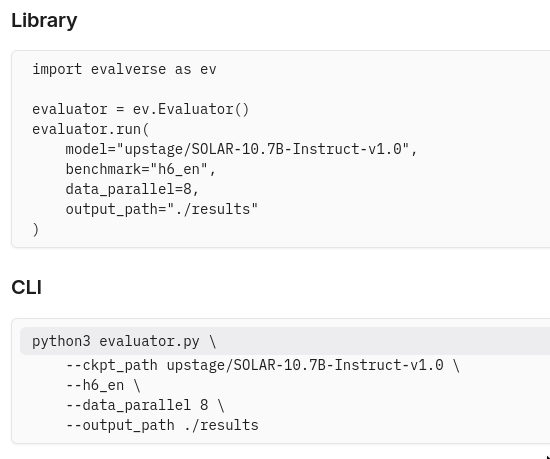

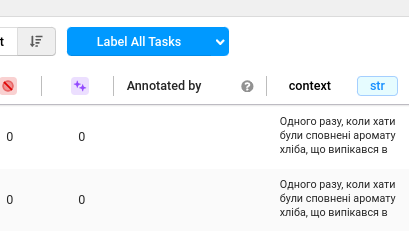

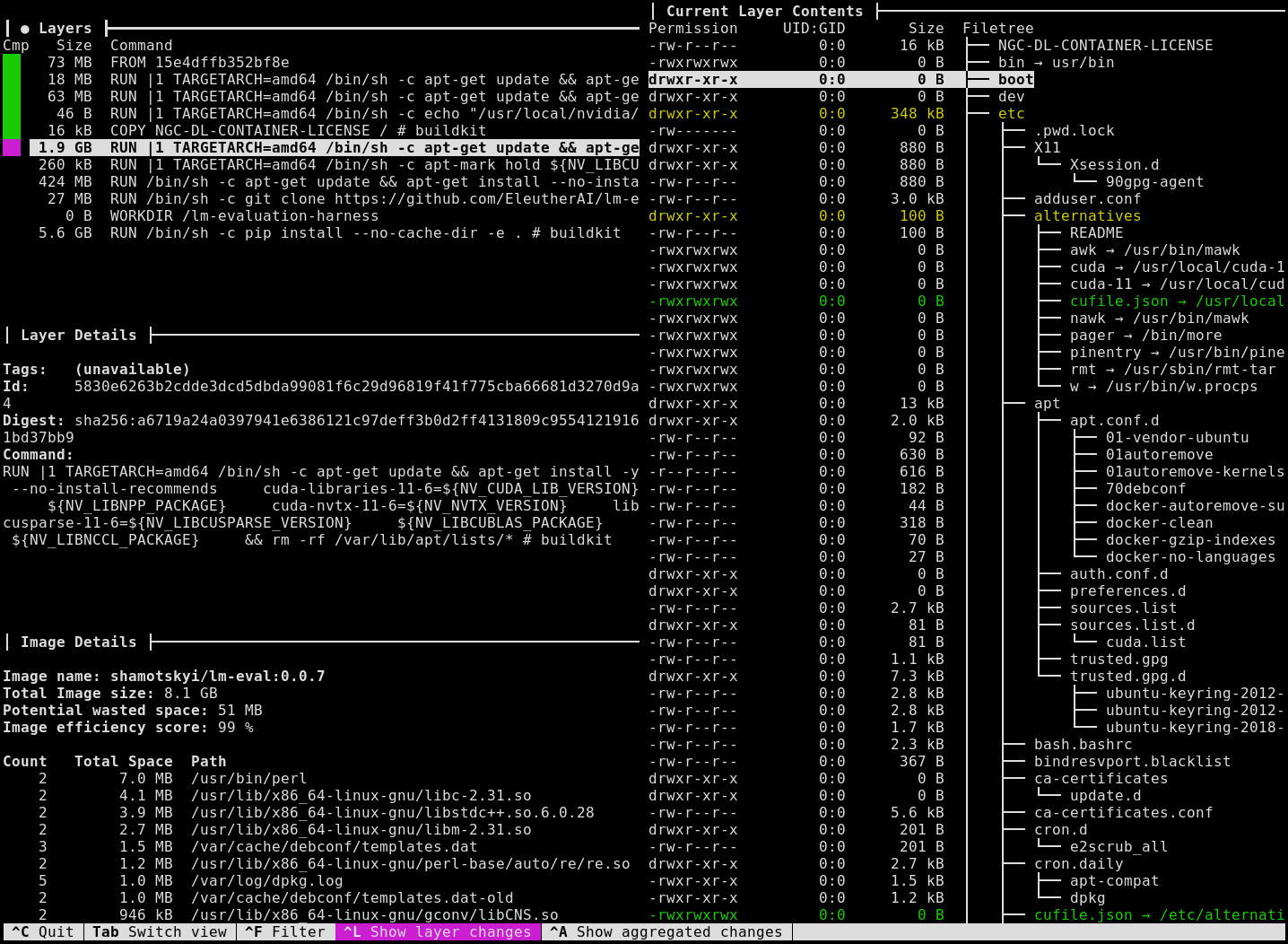

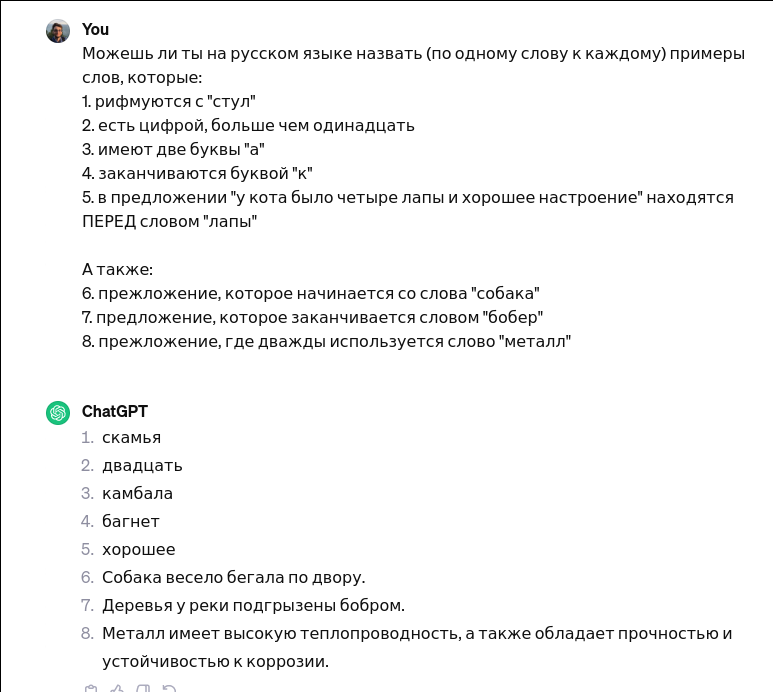

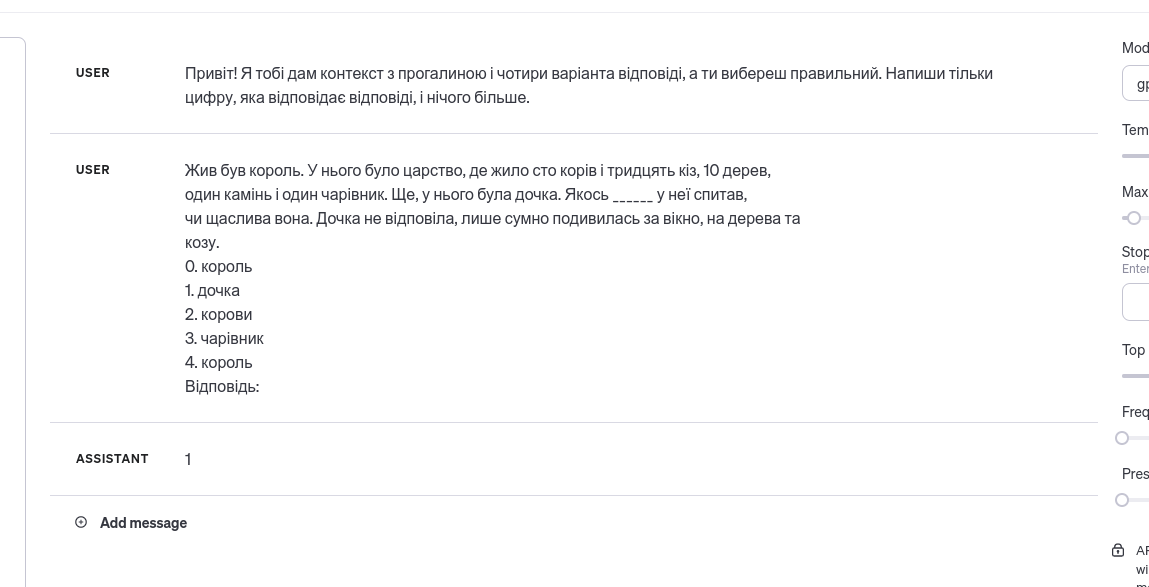

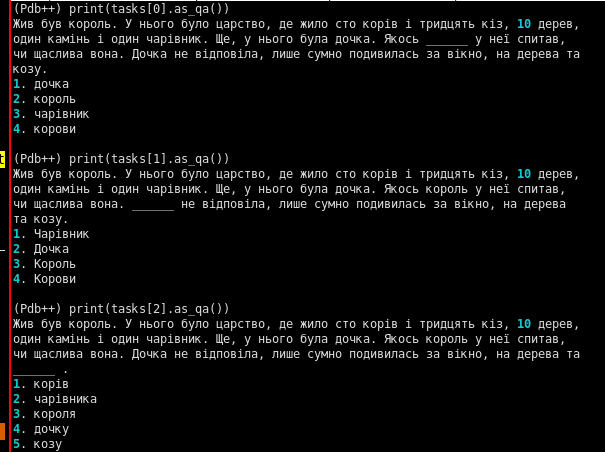

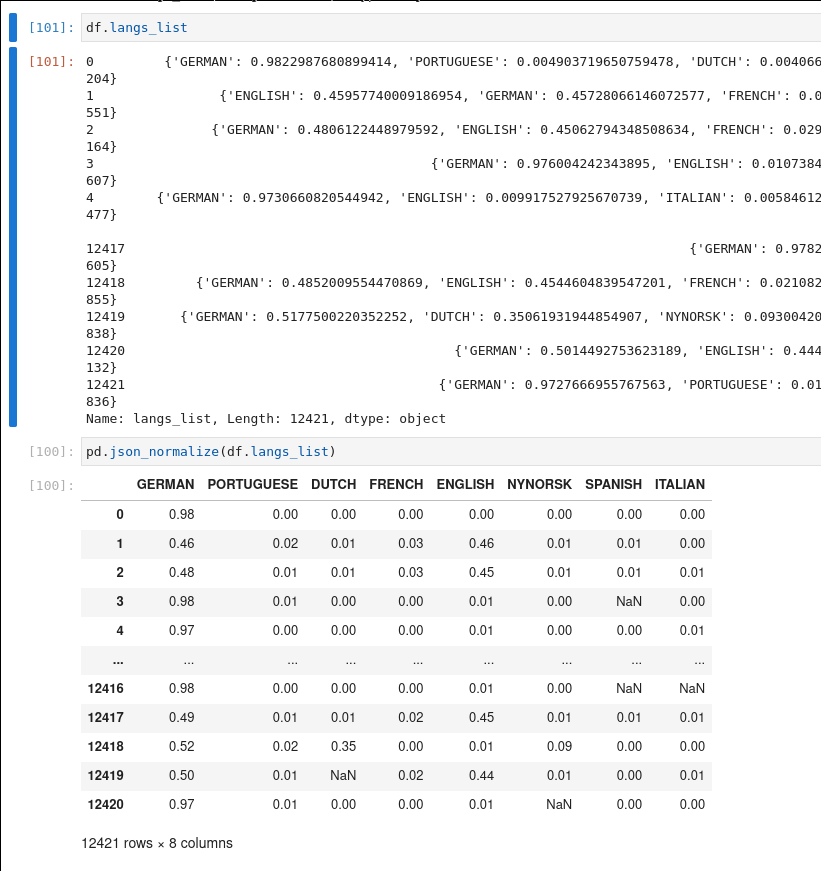

(h6_en is lm-eval)


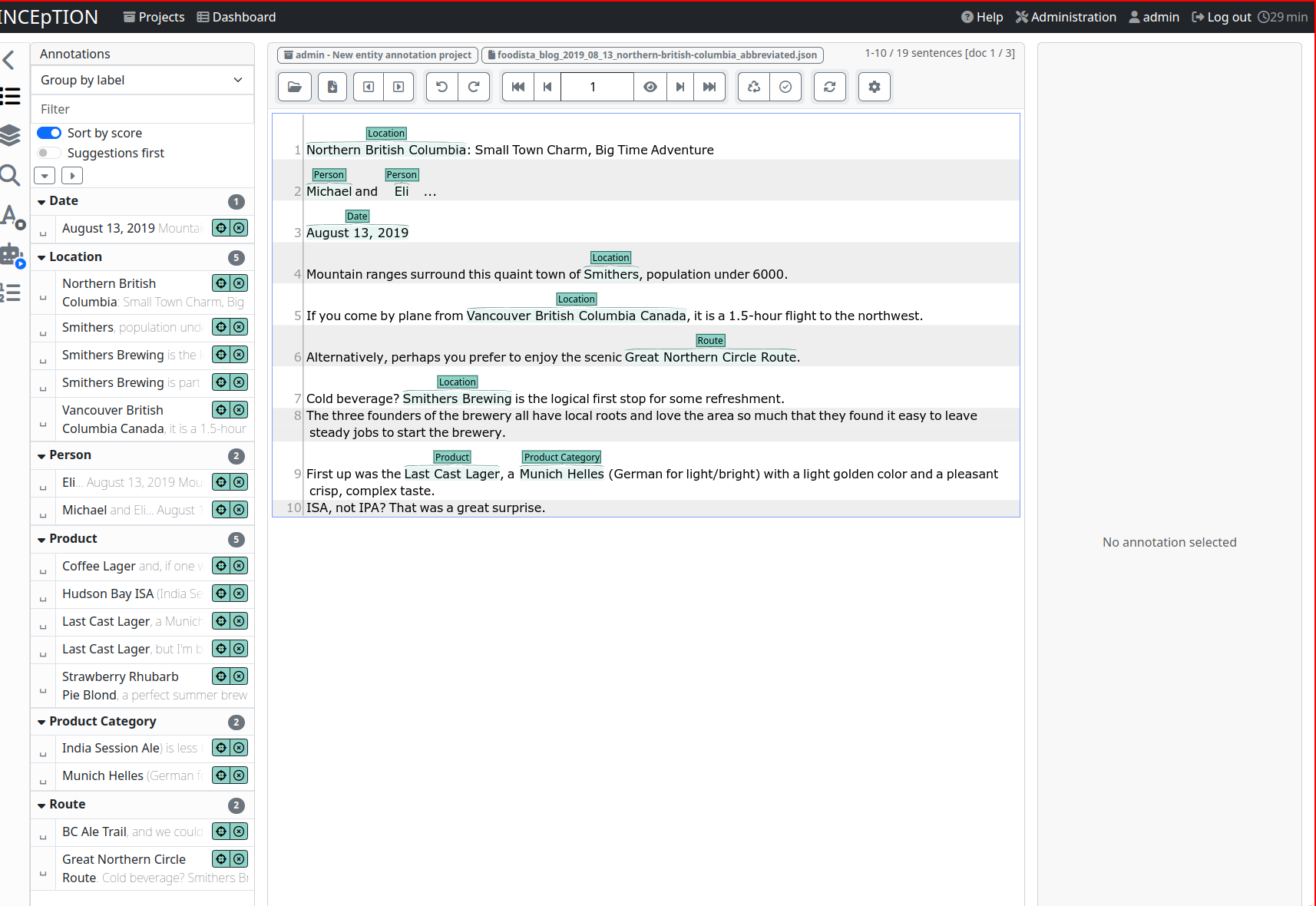

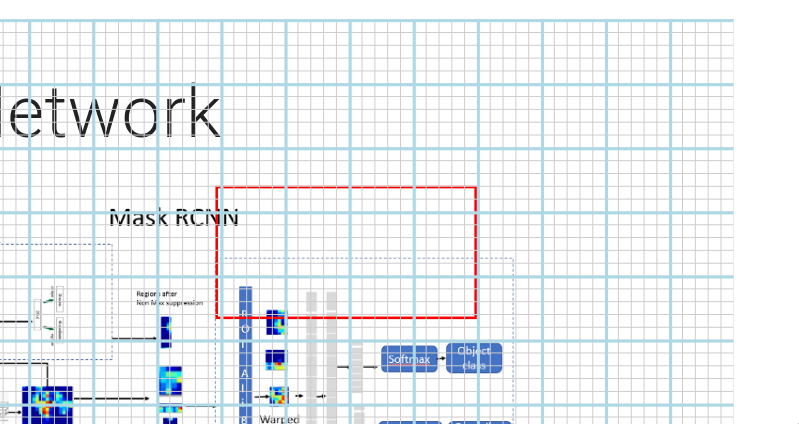

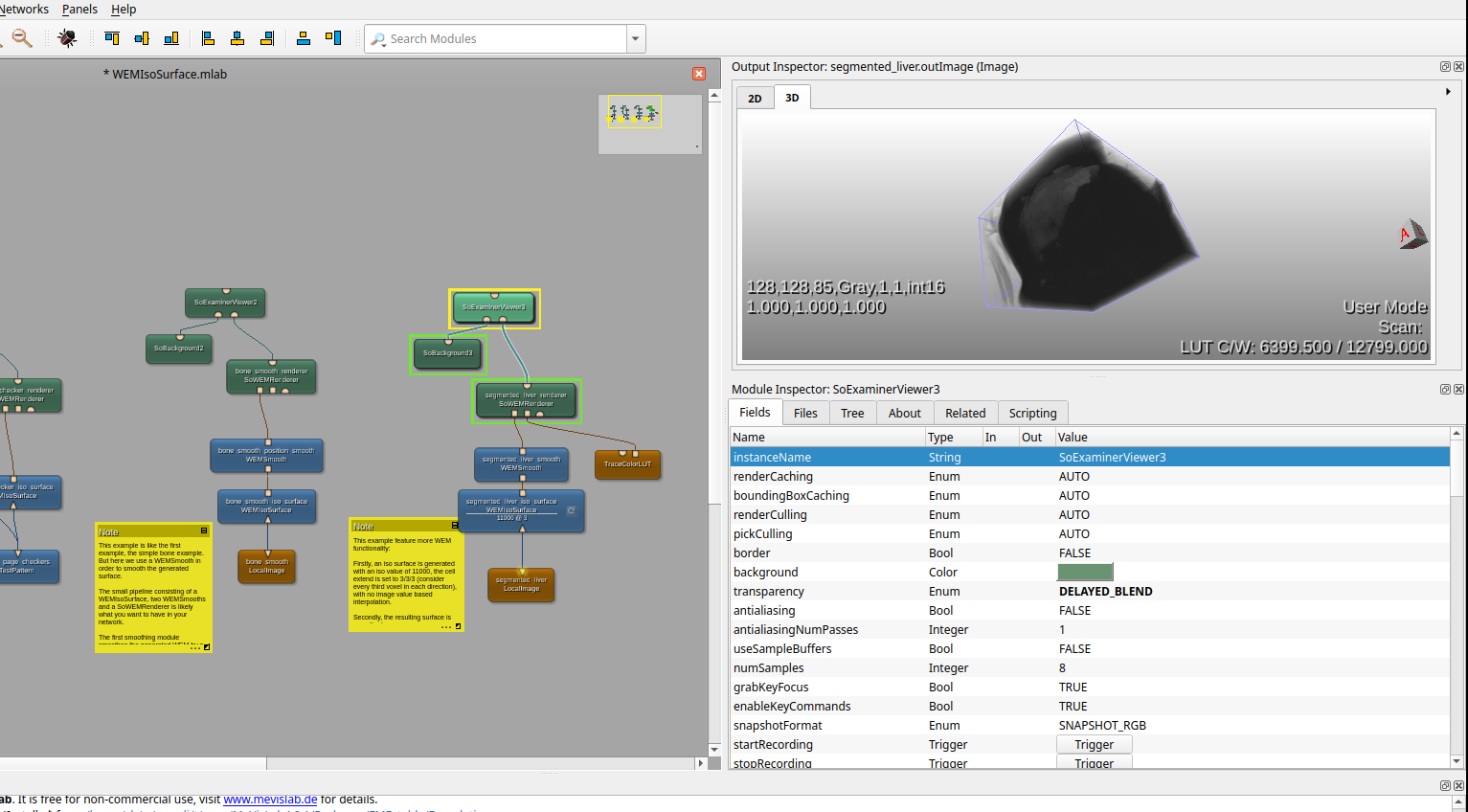

and adding blocks — much less chaotic than 3d slicer (at first glance)


(EDIT: oh damn it’s 7, not 6!)


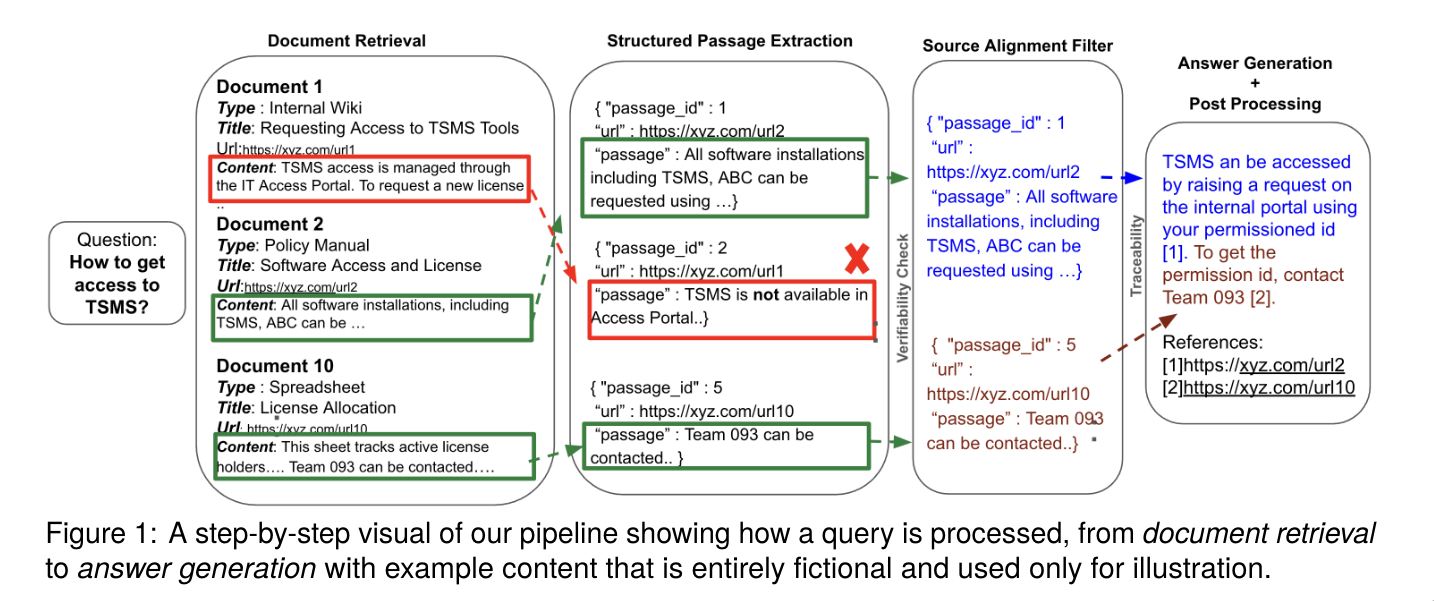

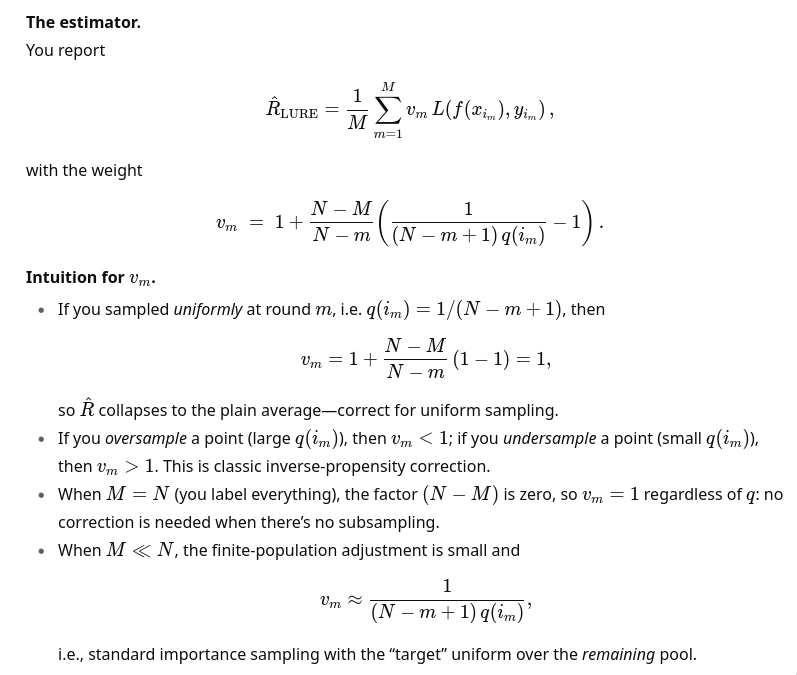

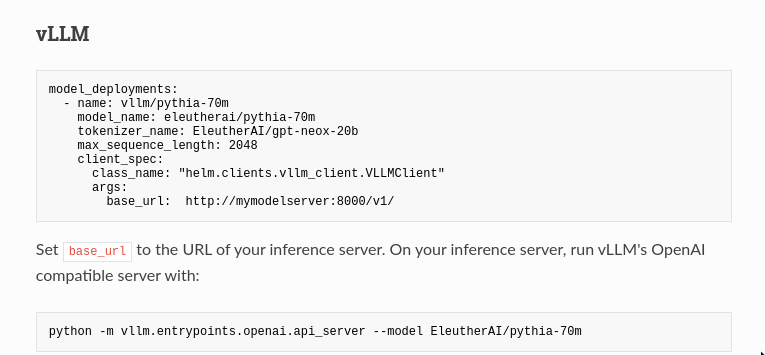

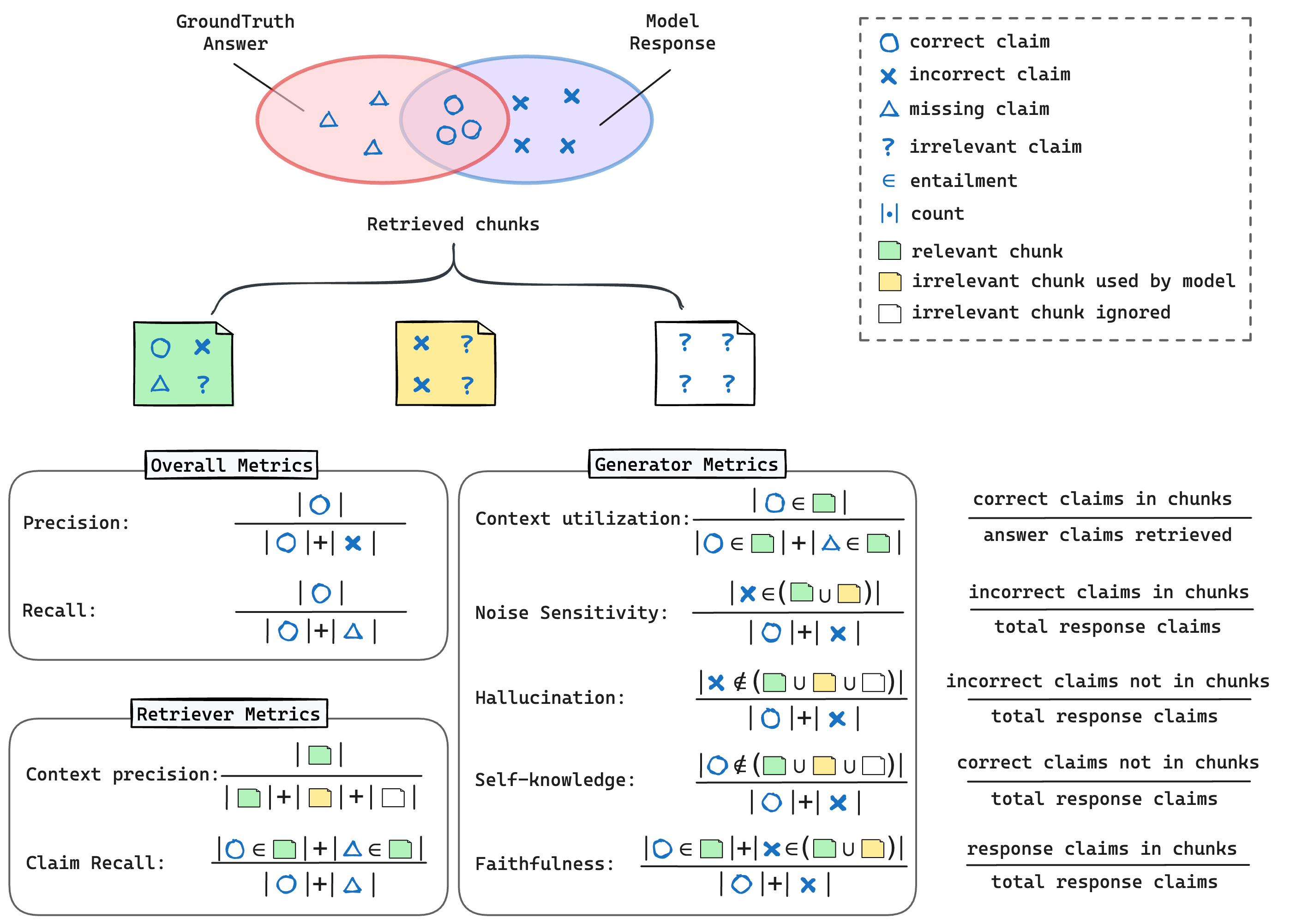

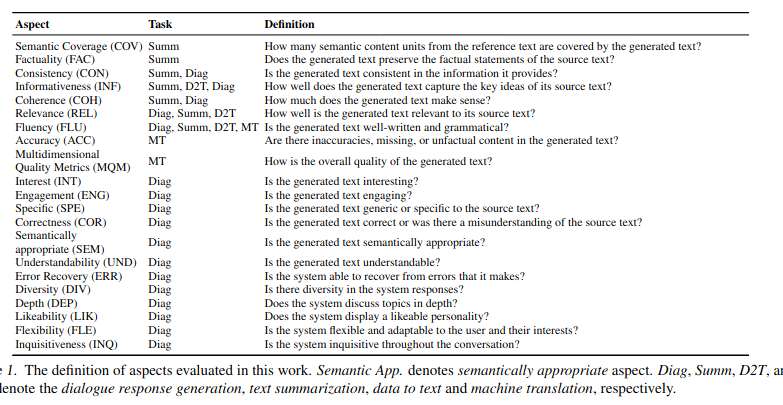

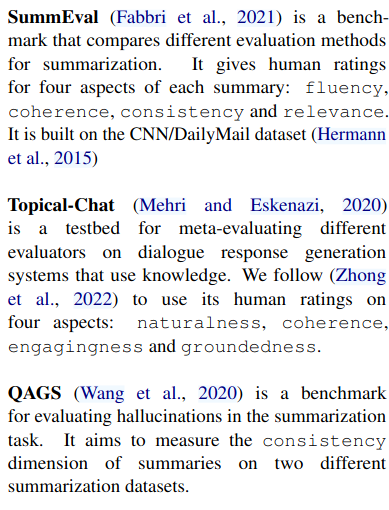

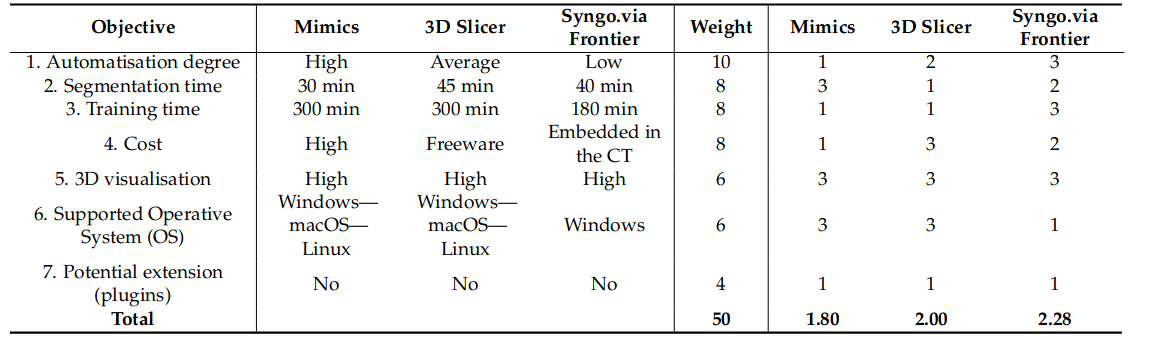

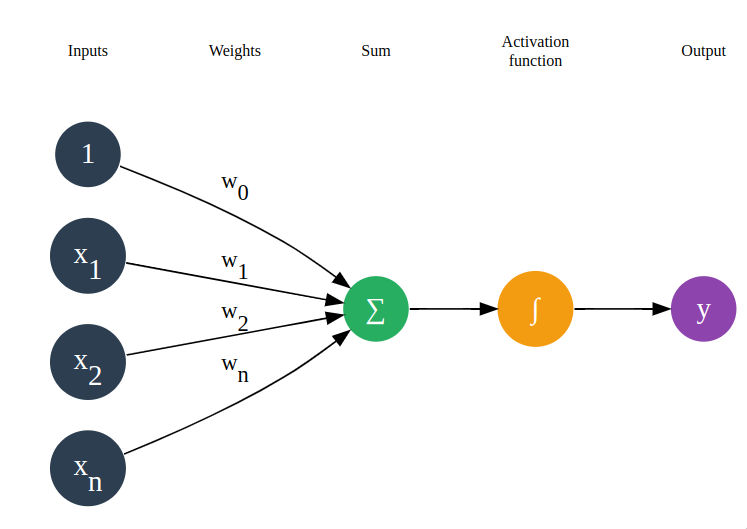

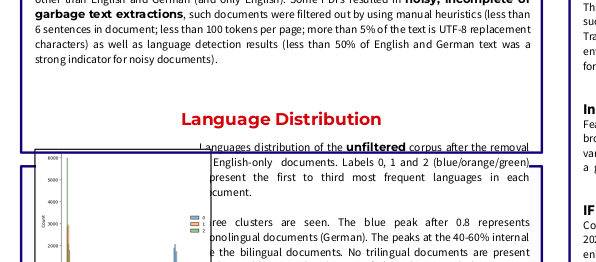

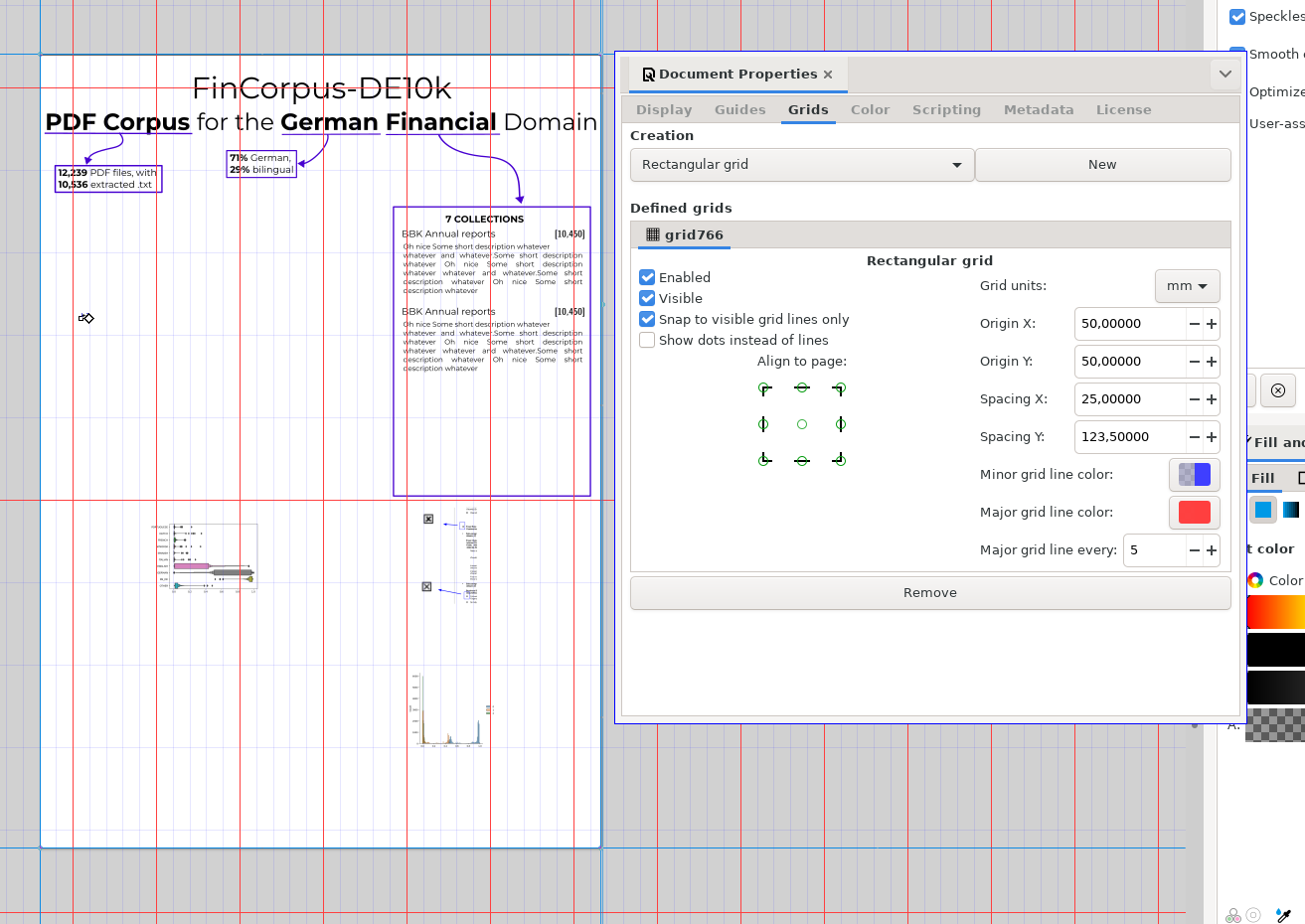

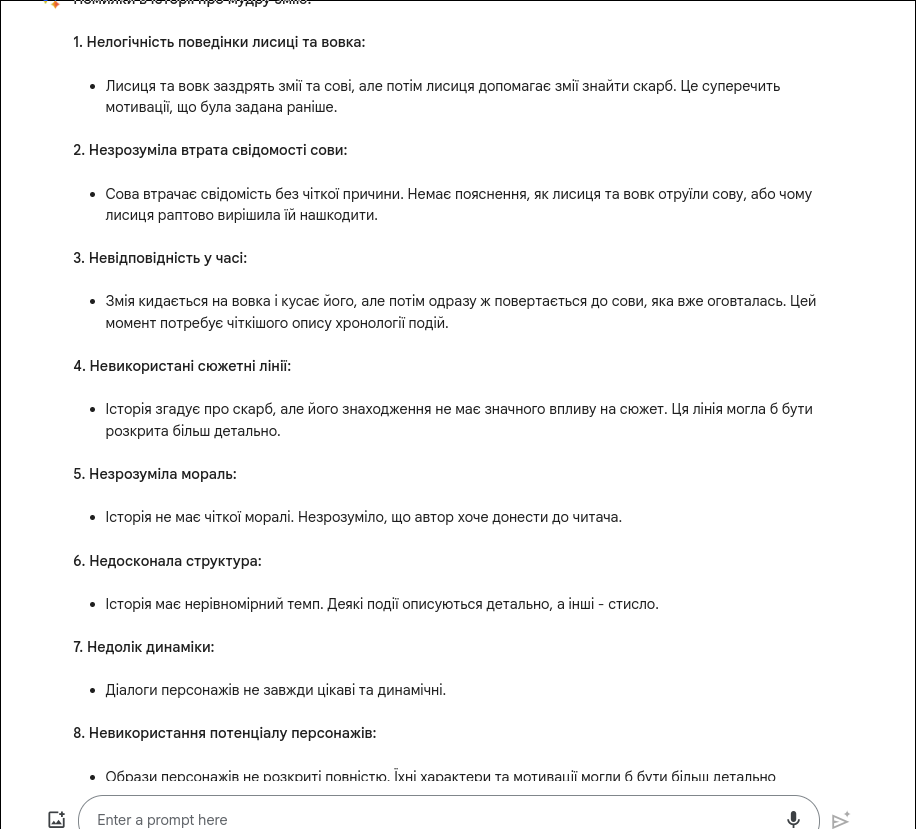

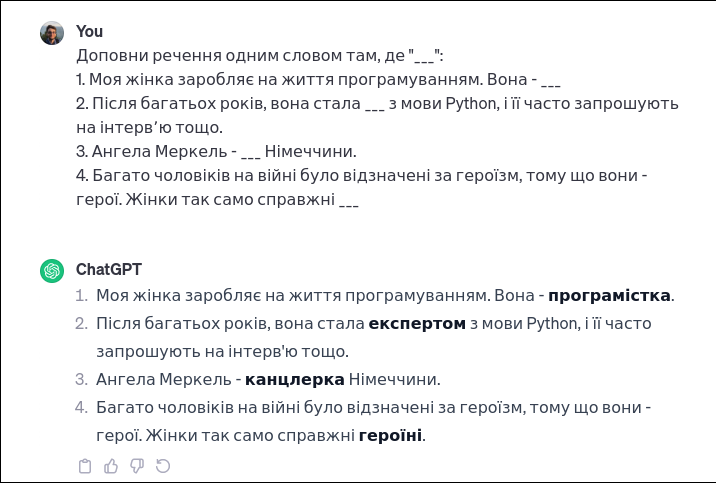

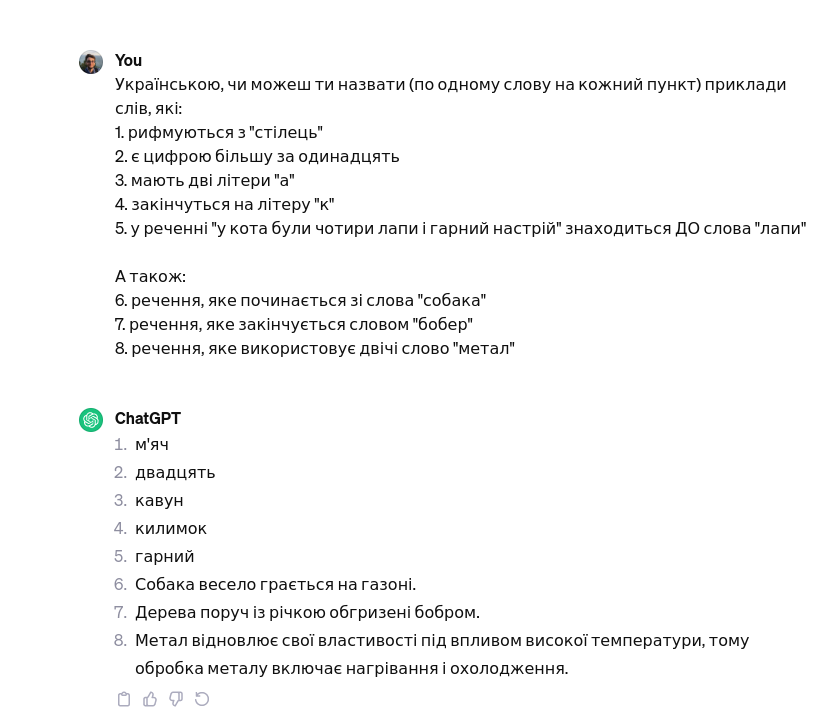

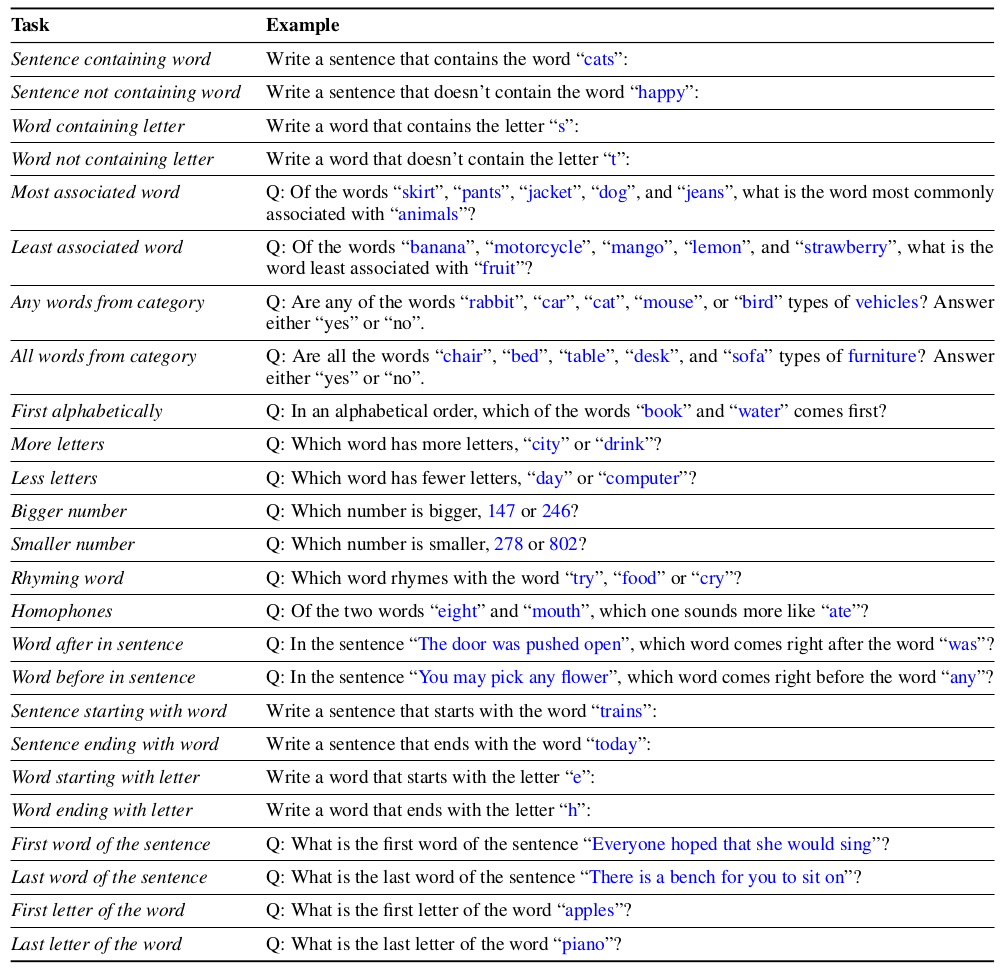

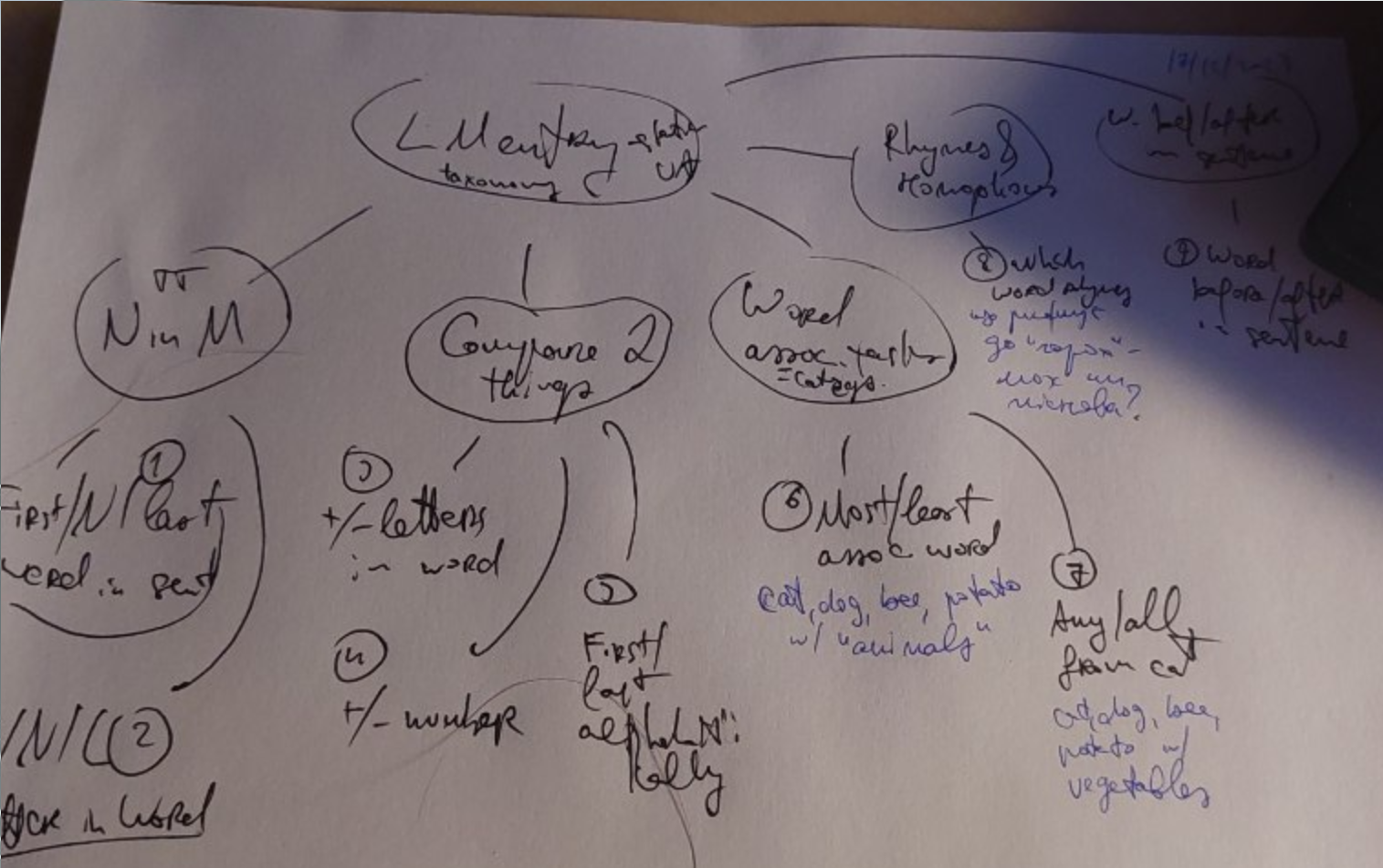

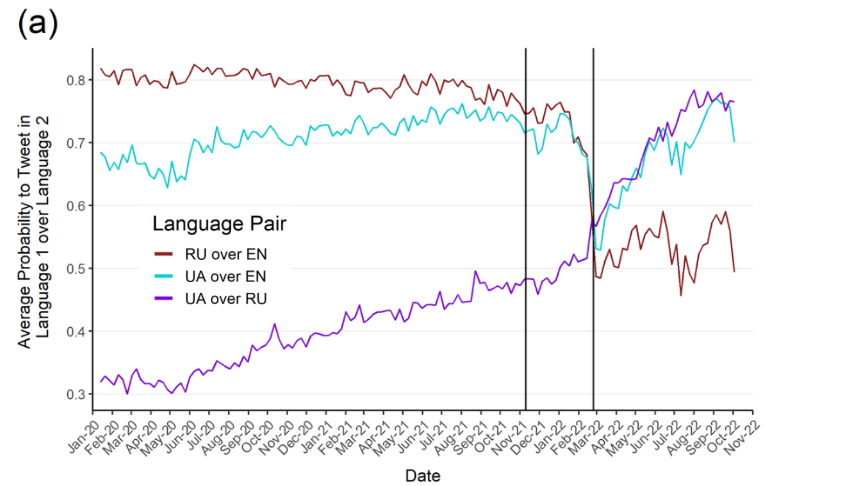

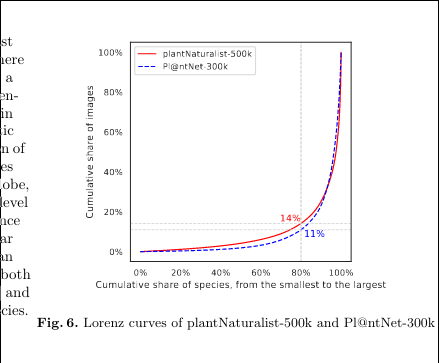

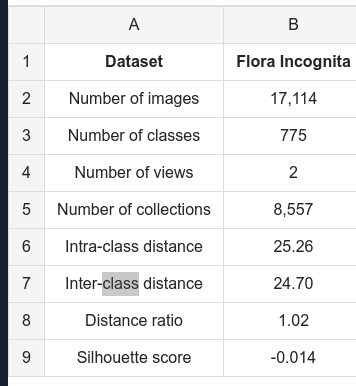

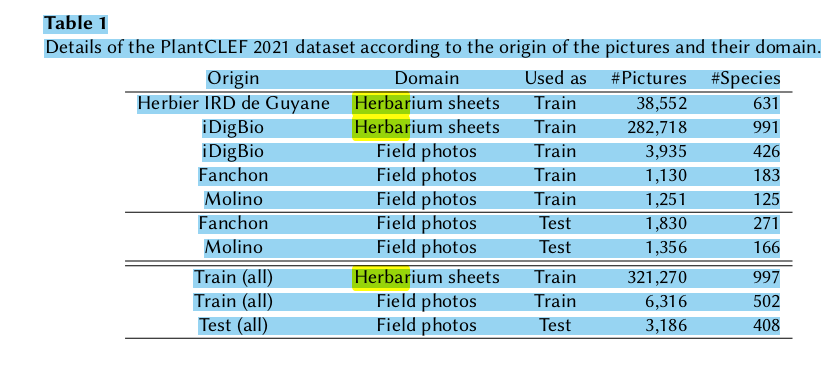

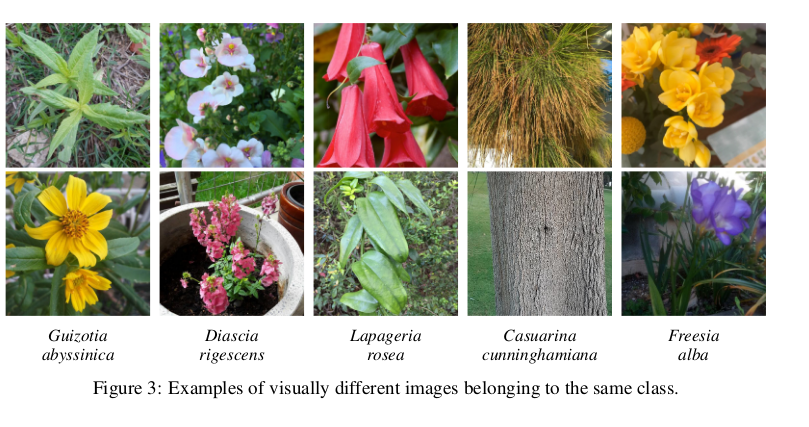

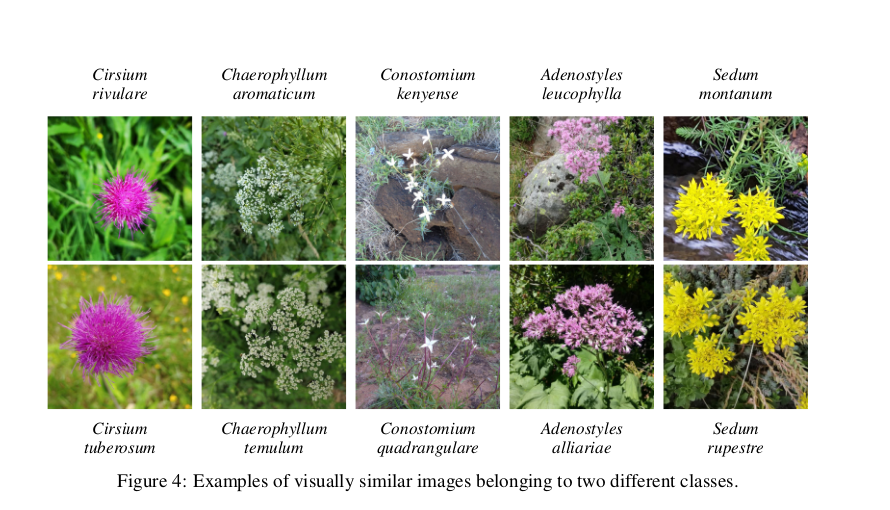

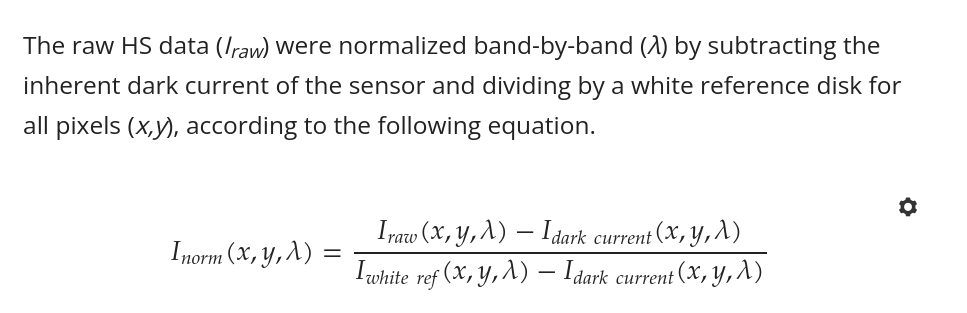

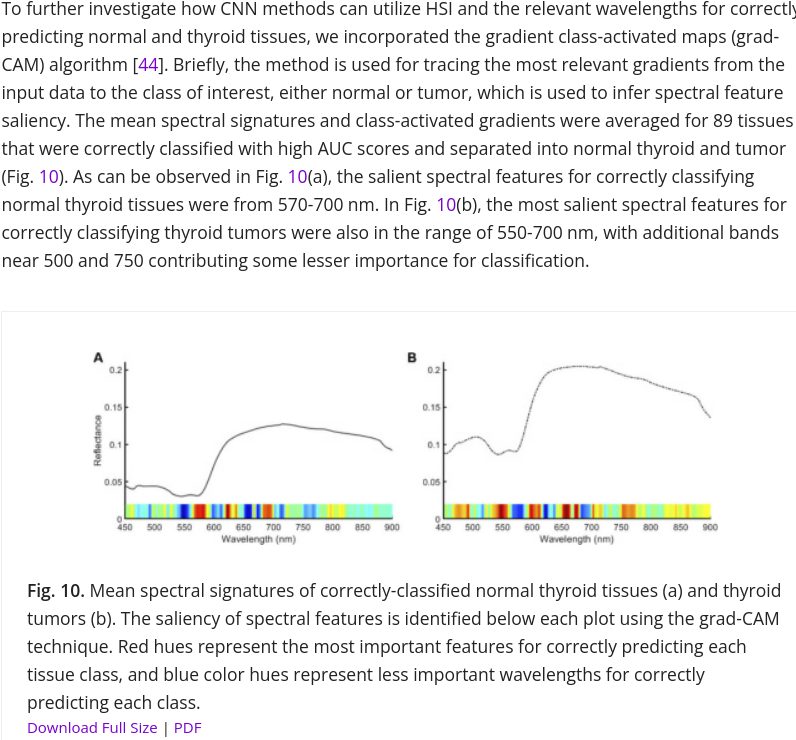

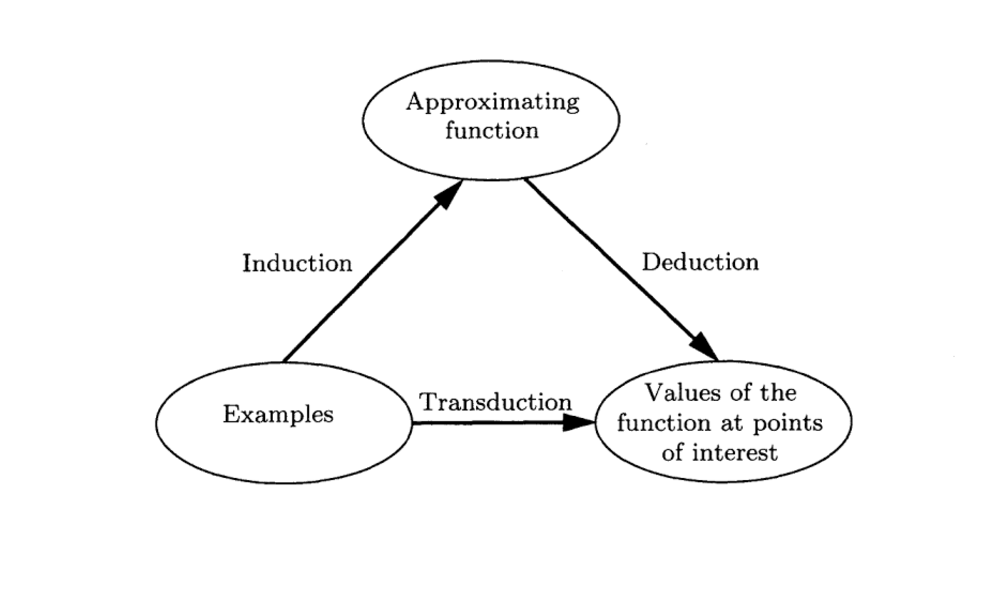

from the paper


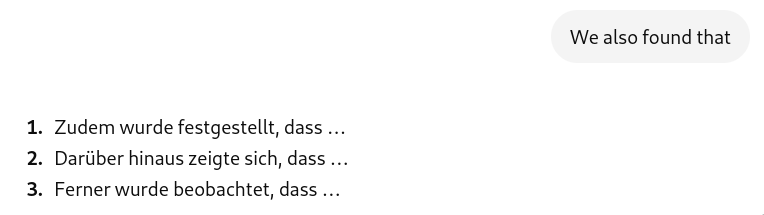

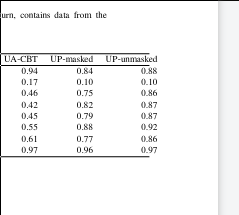

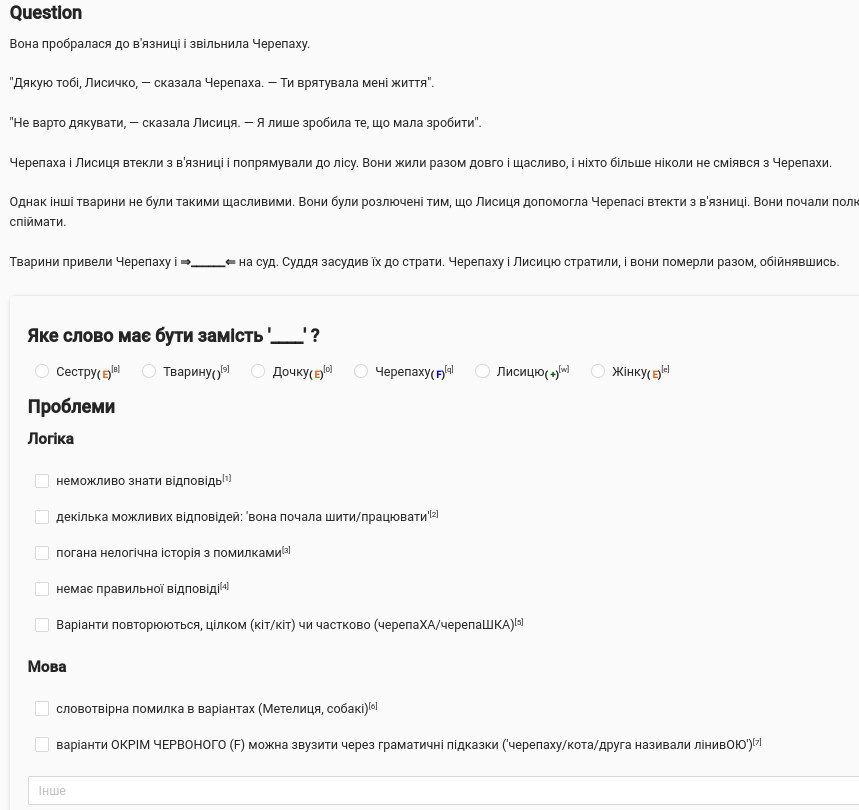

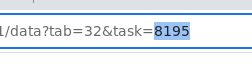

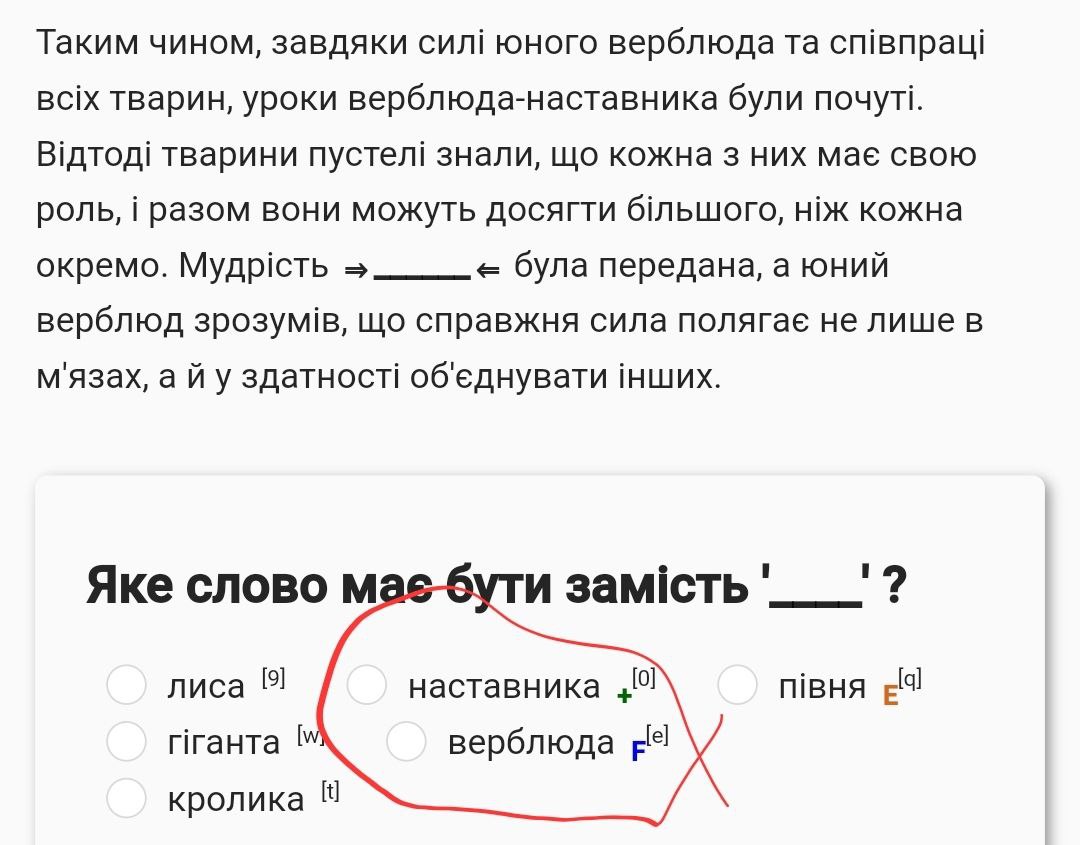

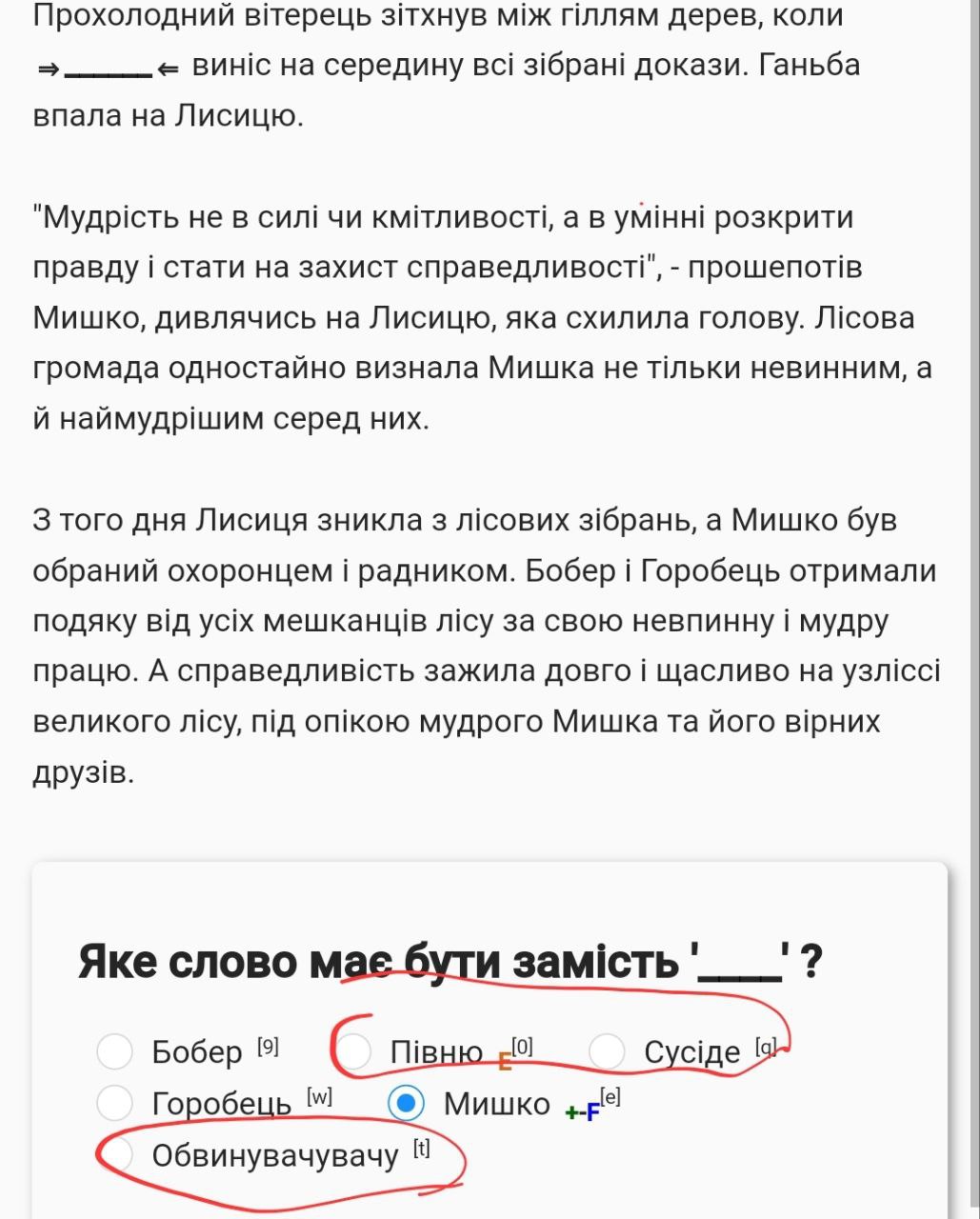

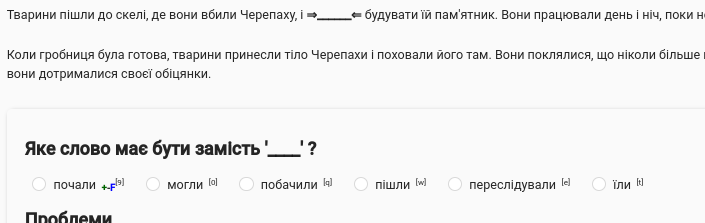

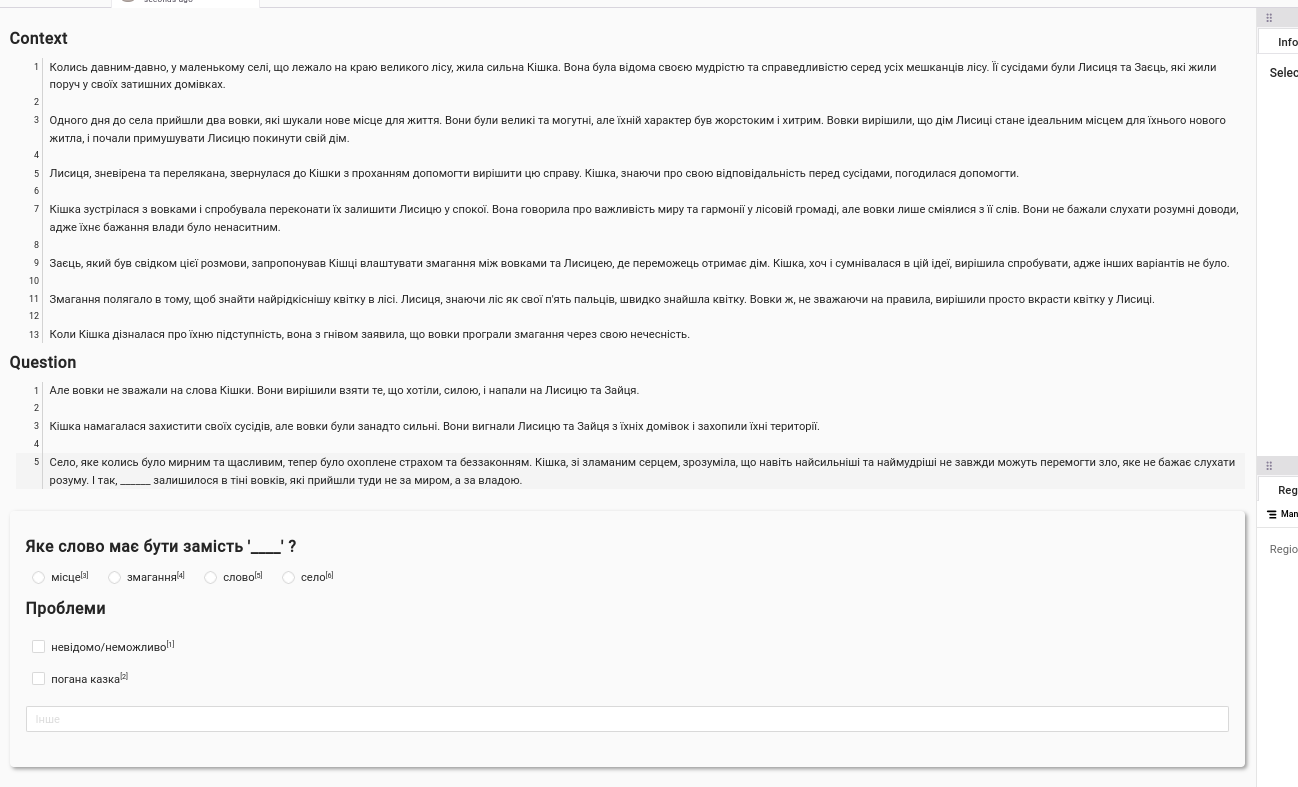

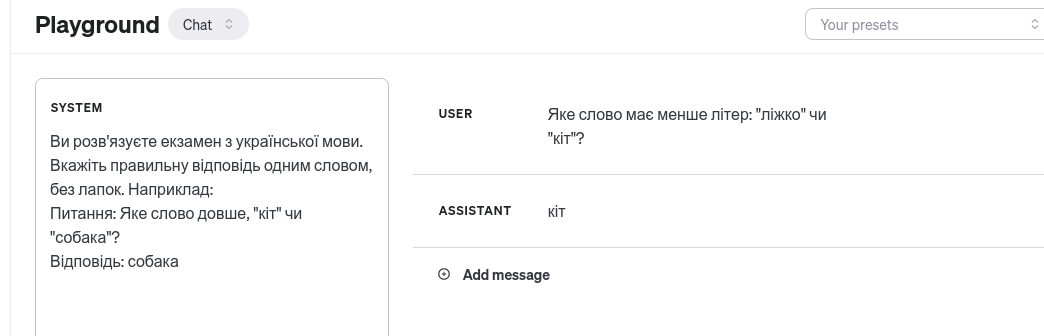

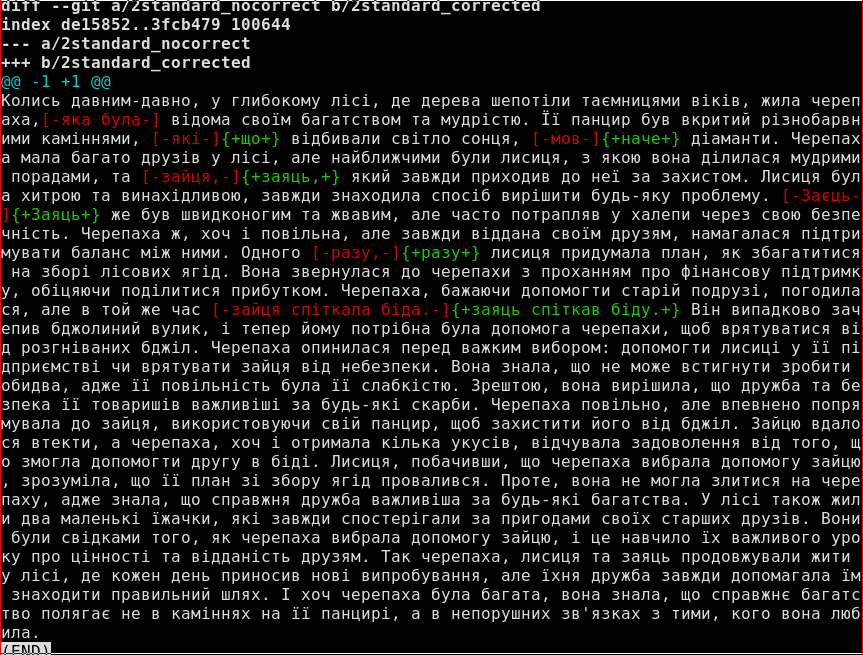

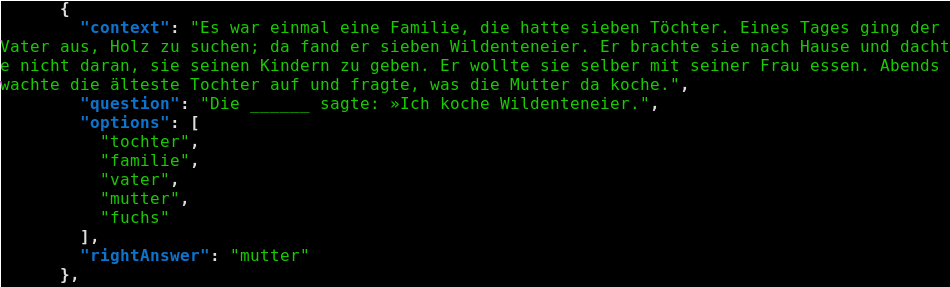

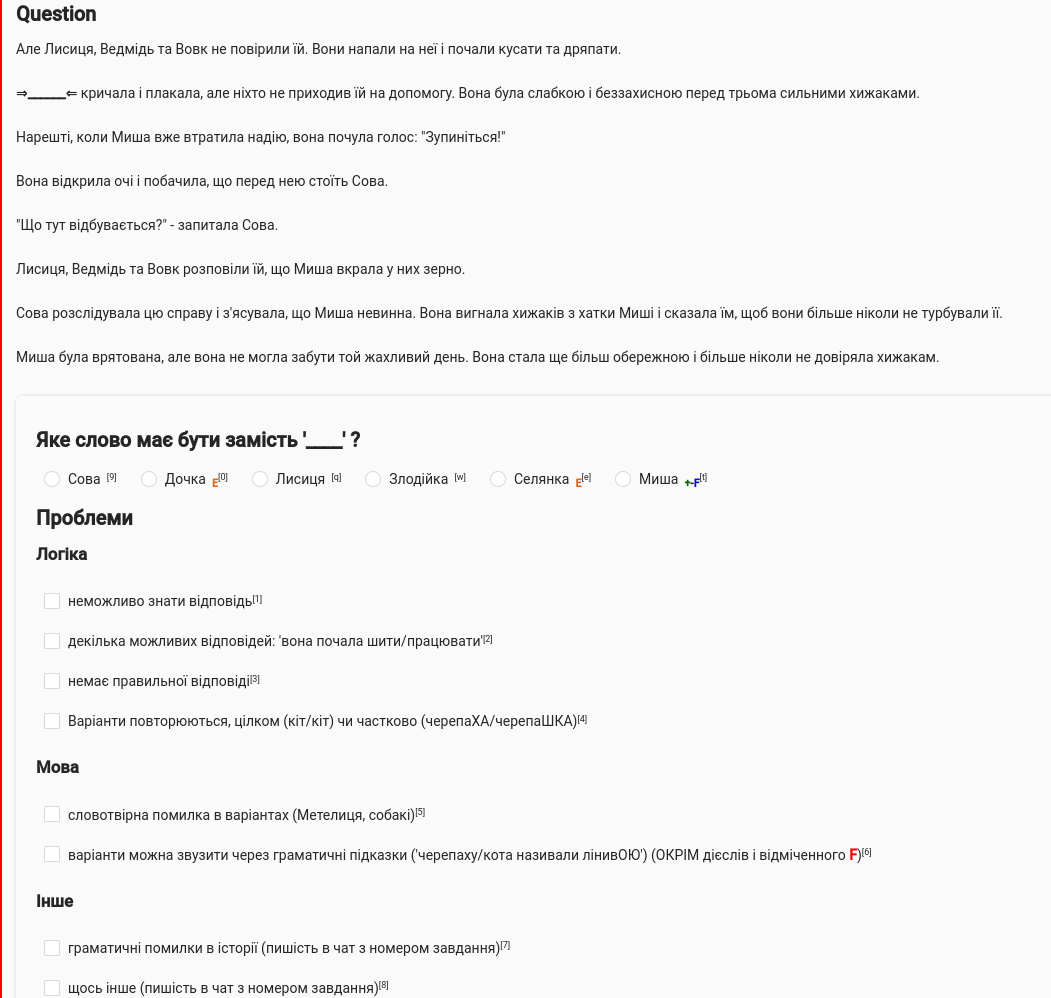

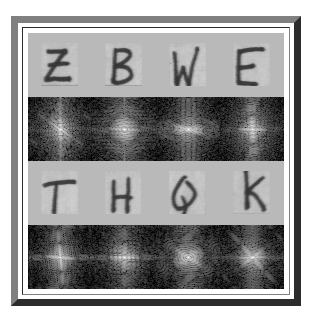

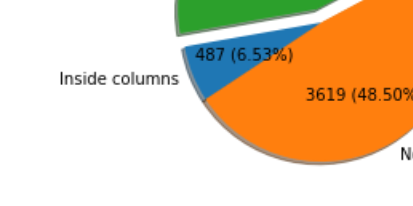

- … leading to a probability not of 1/4(..10) but 1/2

- one way to filter out such bad examples is to get an LM to solve the task without providing context, or, even better, to look at the distribution of probabilities over the answers and see if some are MUCH more likely than the others
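The probability-distribution check could look roughly like the sketch below. It assumes I already have one log-probability per answer option, scored by an LM *without* the context; the function names and the `ratio` threshold of 3 are arbitrary choices for illustration, not something taken from a paper or from lm-eval.

```python
import math


def softmax(logprobs):
    """Turn per-option log-probabilities into a normalized distribution."""
    m = max(logprobs)
    exps = [math.exp(x - m) for x in logprobs]  # subtract max for stability
    total = sum(exps)
    return [e / total for e in exps]


def looks_guessable(option_logprobs, ratio=3.0):
    """Flag an item whose top option is MUCH more likely than the runner-up.

    option_logprobs: context-free LM scores, one per answer option (assumed input).
    ratio: hypothetical threshold; how big "MUCH" is needs tuning on real data.
    """
    if len(option_logprobs) < 2:
        return False
    probs = sorted(softmax(option_logprobs), reverse=True)
    return probs[0] >= ratio * probs[1]


# Near-uniform scores: nothing stands out, keep the item.
print(looks_guessable([-1.0, -1.1, -0.9, -1.0]))
# One option dominates even without context: candidate for filtering.
print(looks_guessable([-0.1, -5.0, -6.0, -5.5]))
```

Comparing the top option to the runner-up is only one possible criterion; entropy of the distribution would be another.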

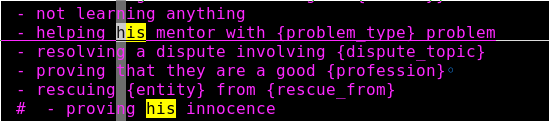

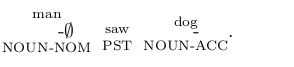

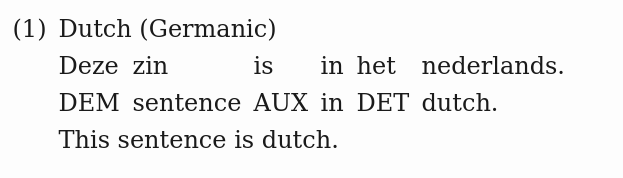

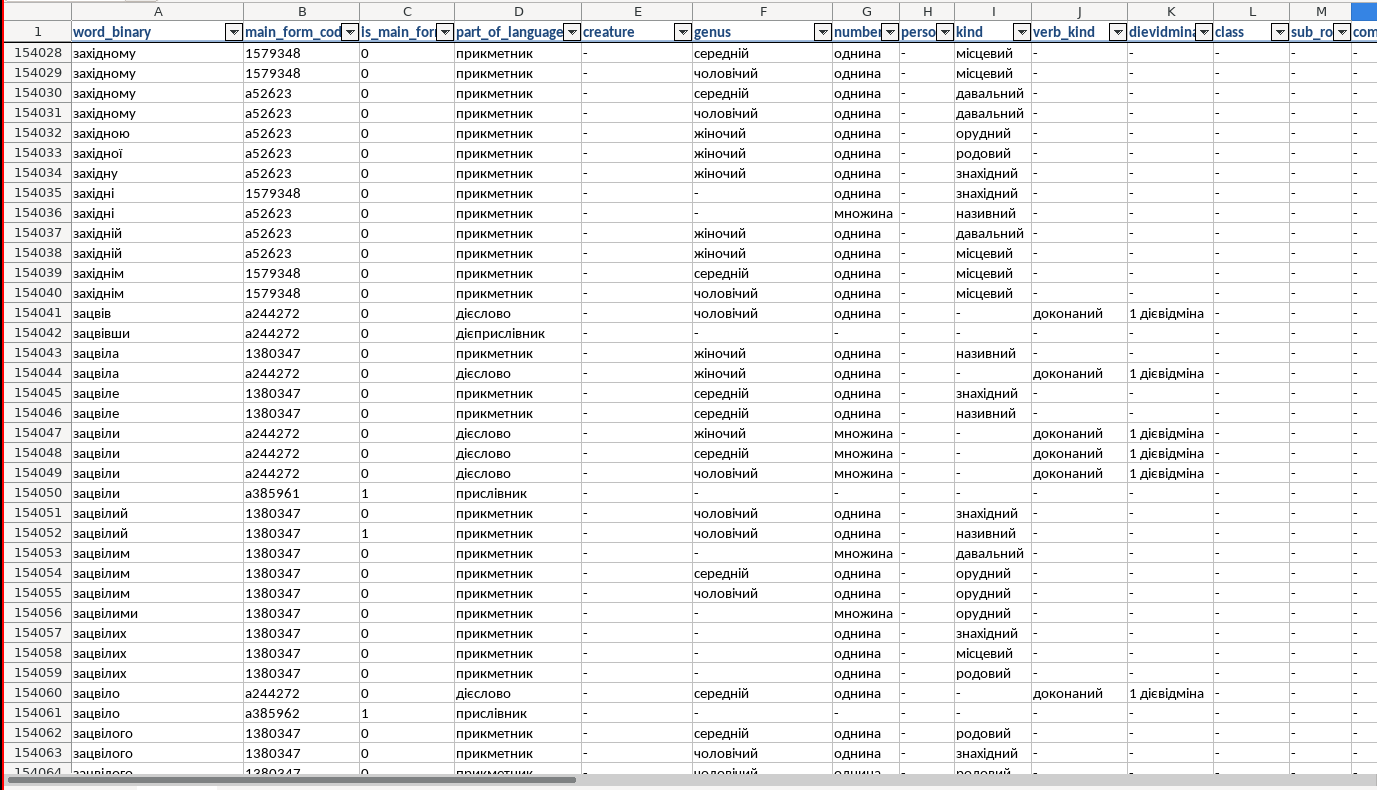

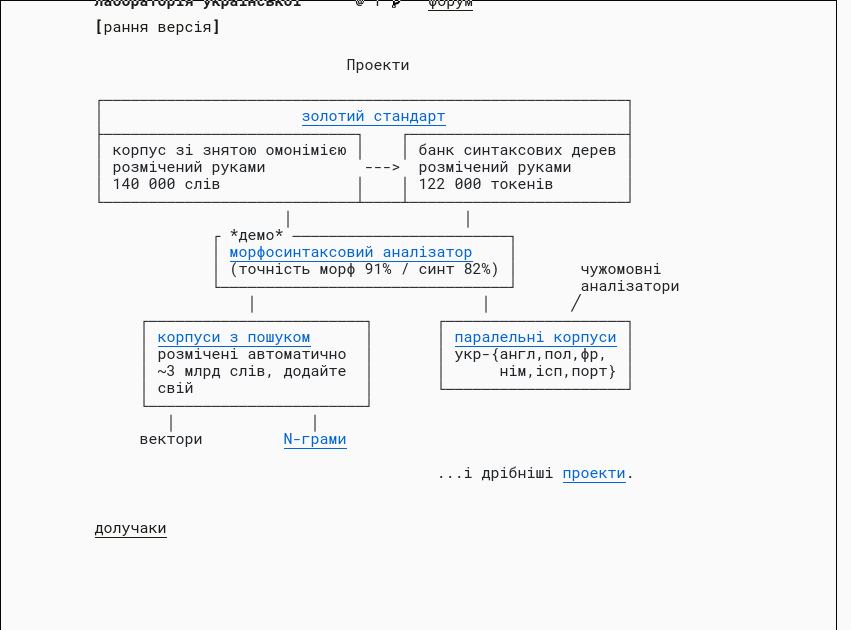

- Issue with 2-3-4 plurals: I can just create three classes of nouns, singular, 2-3-4, and >=5
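A minimal sketch of the three-class idea, assuming the usual Ukrainian agreement rule for whole numbers. The note above only names the three classes; the handling of compound numbers (21 behaves like 1, 22 like 2, but 11 to 14 fall into the last class) is my addition, and the function name is made up.

```python
def plural_class(n: int) -> str:
    """Map a whole number to the noun class it requires.

    Classes follow the note: "singular", "2-3-4", ">=5".
    Assumption: compound numbers agree by their last digit,
    except 11-14, which behave like >=5.
    """
    n = abs(n)
    last = n % 10
    last_two = n % 100
    if last == 1 and last_two != 11:
        return "singular"
    if 2 <= last <= 4 and not 12 <= last_two <= 14:
        return "2-3-4"
    return ">=5"


for n in (1, 3, 5, 11, 12, 21, 22):
    print(n, plural_class(n))
```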

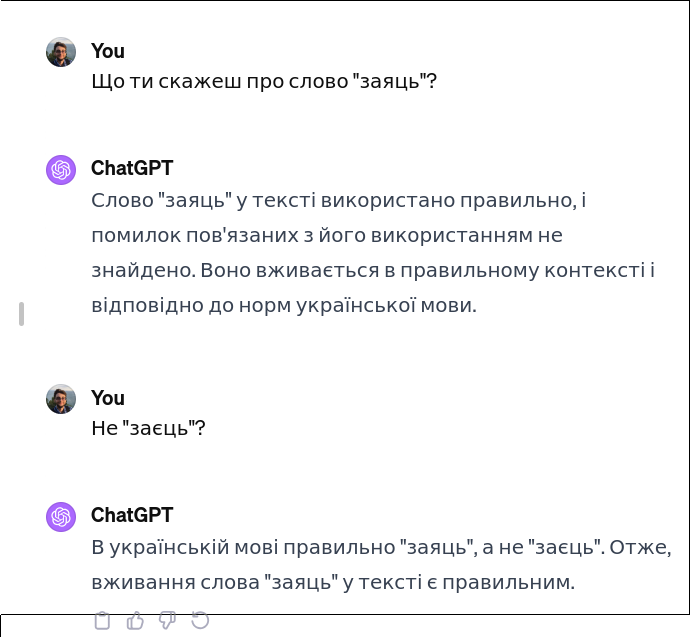

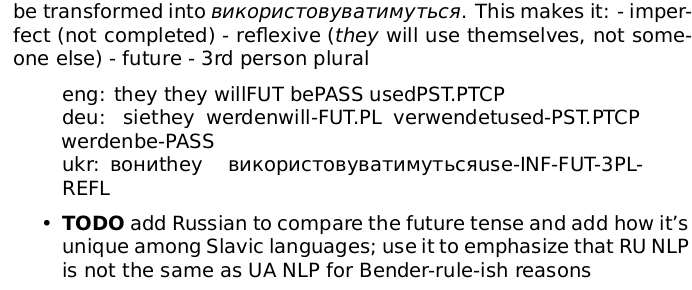

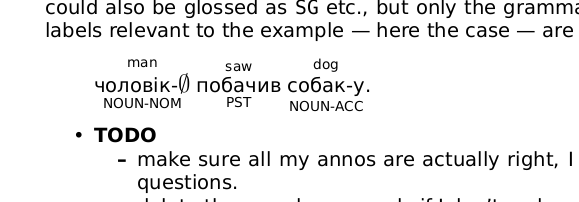

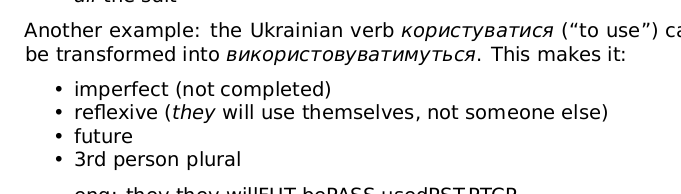

- don’t forget to discuss the morphological complexities in the master’s thesis

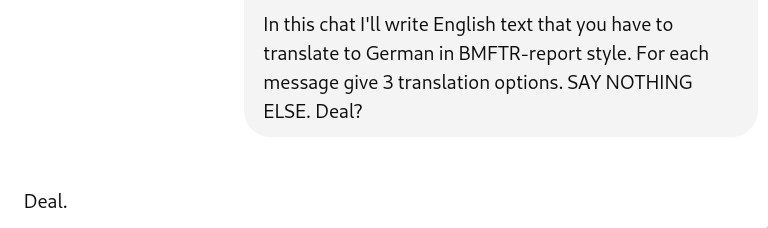

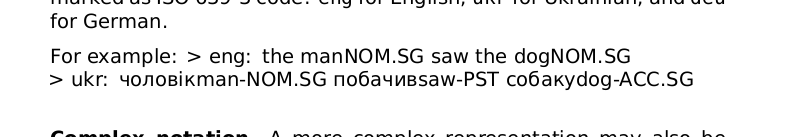

- Conveying the issues in English is hard, but for a given UA example I can:

  - provide the morphology info for the English words

  - provide a third German translation

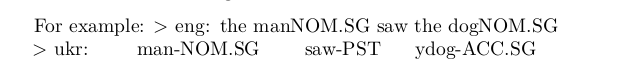


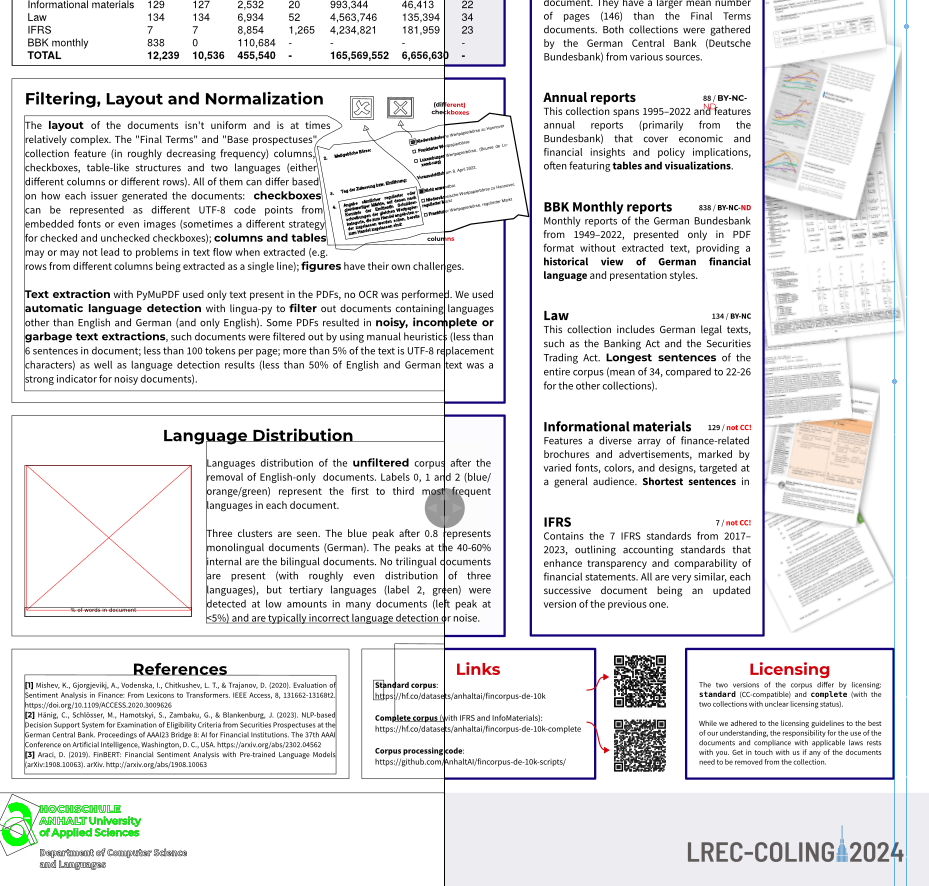

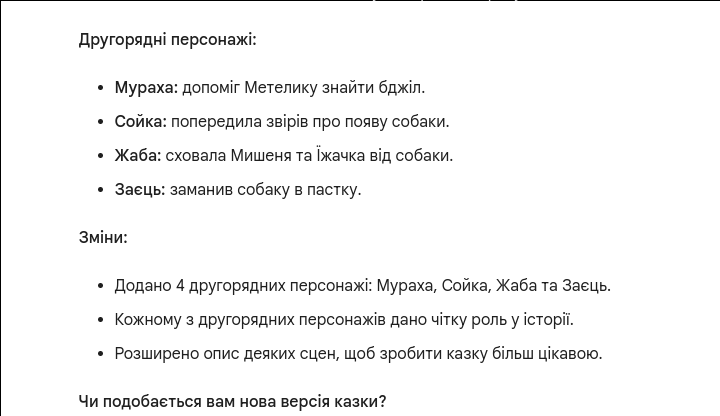

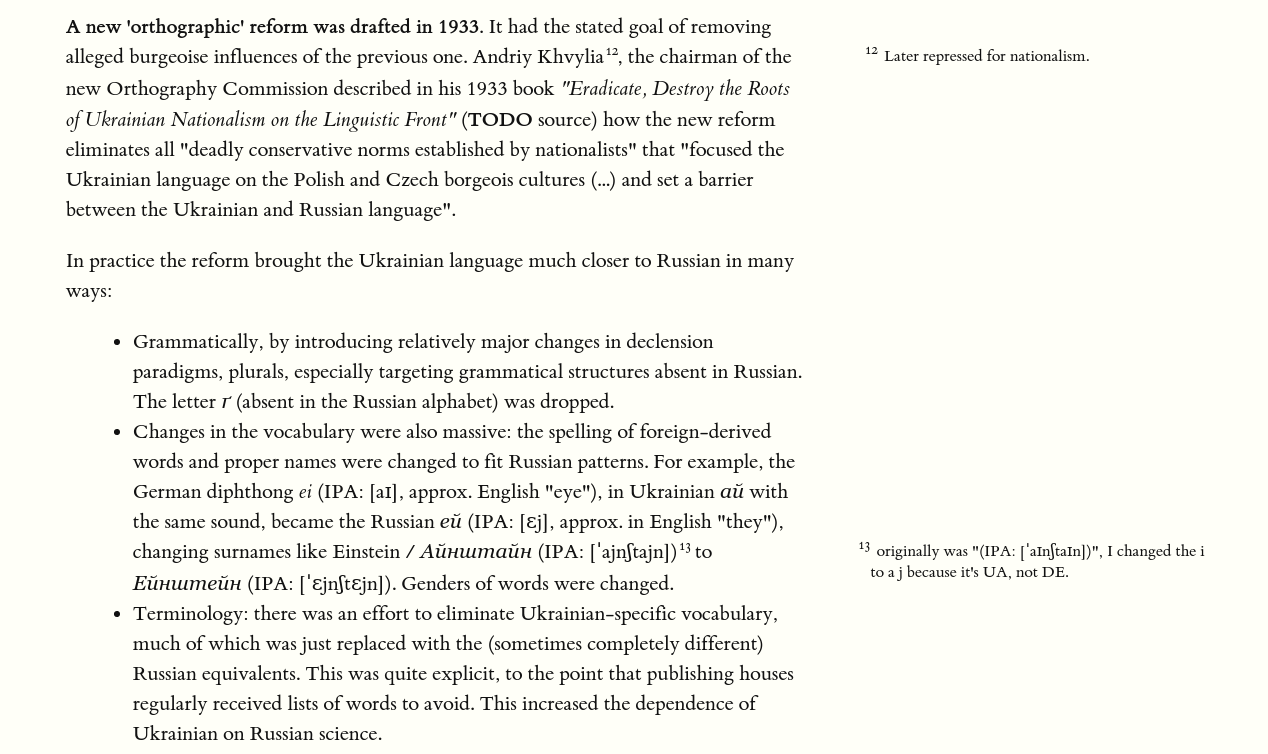
